Heuristic for ranking scientific research results

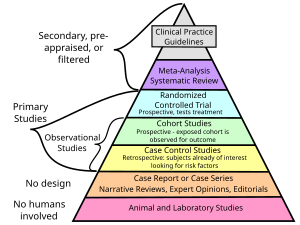

A hierarchy of evidence, comprising levels of evidence (LOEs), that is, evidence levels (ELs), is a heuristic used to rank the relative strength of results obtained from experimental research, especially medical research. There is broad agreement on the relative strength of large-scale, epidemiological studies. More than 80 different hierarchies have been proposed for assessing medical evidence.[1] The design of the study (such as a case report for an individual patient or a blinded randomized controlled trial) and the endpoints measured (such as survival or quality of life) affect the strength of the evidence. In clinical research, the best evidence for treatment efficacy is mainly from meta-analyses of randomized controlled trials (RCTs).[2][3] Systematic reviews of completed, high-quality randomized controlled trials – such as those published by the Cochrane Collaboration – rank the same as systematic reviews of completed, high-quality observational studies in regard to the study of side effects.[4] Evidence hierarchies are often applied in evidence-based practices and are integral to evidence-based medicine (EBM).

Definition

In 2014, Jacob Stegenga defined a hierarchy of evidence as "rank-ordering of kinds of methods according to the potential for that method to suffer from systematic bias". At the top of the hierarchy is the method with the most freedom from systematic bias, or the best internal validity, relative to the tested medical intervention's hypothesized efficacy.[5]: 313

In 1997, Greenhalgh suggested it was "the relative weight carried by the different types of primary study when making decisions about clinical interventions".[6]

The National Cancer Institute defines levels of evidence as "a ranking system used to describe the strength of the results measured in a clinical trial or research study. The design of the study ... and the endpoints measured ... affect the strength of the evidence."[7]

Examples

Canadian Association of Pharmacy in Oncology

[9]Example hierarchies of evidence in medicine

A large number of hierarchies of evidence have been proposed. Similar protocols for evaluation of research quality are still in development. So far, the available protocols pay relatively little attention to whether outcome research is relevant to efficacy (the outcome of a treatment performed under ideal conditions) or to effectiveness (the outcome of the treatment performed under ordinary, expectable conditions).[citation needed]

GRADE

The GRADE approach (Grading of Recommendations Assessment, Development and Evaluation) is a method of assessing the certainty in evidence (also known as quality of evidence or confidence in effect estimates) and the strength of recommendations.[10] GRADE began in 2000 as a collaboration of methodologists, guideline developers, biostatisticians, clinicians, public health scientists and other interested members.[citation needed]

Over 100 organizations (including the World Health Organization, the UK National Institute for Health and Care Excellence (NICE), the Canadian Task Force for Preventive Health Care, the Colombian Ministry of Health, among others) have endorsed and/or are using GRADE to evaluate the quality of evidence and strength of health care recommendations. (See examples of clinical practice guidelines using GRADE online).[11][12]

GRADE rates quality of evidence as follows:[13][14]

| Quality of evidence | Definition |
| --- | --- |
| High | There is a lot of confidence that the true effect lies close to that of the estimated effect. |
| Moderate | There is moderate confidence in the estimated effect: The true effect is likely to be close to the estimated effect, but there is a possibility that it is substantially different. |
| Low | There is limited confidence in the estimated effect: The true effect might be substantially different from the estimated effect. |
| Very low | There is very little confidence in the estimated effect: The true effect is likely to be substantially different from the estimated effect. |

Guyatt and Sackett

In 1995, Guyatt and Sackett published the first such hierarchy.[15]

Greenhalgh put the different types of primary study in the following order:[6]

- Systematic reviews and meta-analyses of "RCTs with definitive results".

- RCTs with definitive results (confidence intervals that do not overlap the threshold clinically significant effect)

- RCTs with non-definitive results (a point estimate that suggests a clinically significant effect but with confidence intervals overlapping the threshold for this effect)

- Cohort studies

- Case–control studies

- Cross-sectional surveys

- Case reports
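The split between definitive and non-definitive RCT results in the list above rests on whether the confidence interval overlaps the threshold for a clinically significant effect. The following is a minimal sketch of that rule, not Greenhalgh's own procedure. It assumes that larger effect values mean more benefit; the function name `classify_rct_result` and its parameters are hypothetical, and the "unclassified" label covers a case the list does not describe.

```python
def classify_rct_result(point_estimate, ci_low, ci_high, threshold):
    """Label an RCT result using the confidence-interval rule described above.

    Assumption (not from the source): larger values mean more benefit, and
    `threshold` is the smallest effect regarded as clinically significant.
    """
    if ci_low > ci_high:
        raise ValueError("ci_low must not exceed ci_high")

    # "Definitive": the confidence interval does not overlap the threshold.
    overlaps_threshold = ci_low <= threshold <= ci_high
    if not overlaps_threshold:
        return "definitive"

    # "Non-definitive": the point estimate suggests a clinically significant
    # effect, but the interval overlaps the threshold.
    if point_estimate > threshold:
        return "non-definitive"

    # The list above does not name this case; the label is a placeholder.
    return "unclassified"


if __name__ == "__main__":
    # Hypothetical effect sizes, chosen only to exercise each branch.
    print(classify_rct_result(0.50, 0.30, 0.70, threshold=0.20))
    print(classify_rct_result(0.25, 0.10, 0.40, threshold=0.20))
```

Note that under this rule a result whose whole interval lies below the threshold is also "definitive": it definitively fails to show a clinically significant effect.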

Saunders et al.

A protocol suggested by Saunders et al. assigns research reports to six categories, on the basis of research design, theoretical background, evidence of possible harm, and general acceptance. For an intervention to be classified under this protocol, there must be descriptive publications, including a manual or similar description of the intervention. This protocol does not consider the nature of any comparison group, the effect of confounding variables, the nature of the statistical analysis, or a number of other criteria. The categories are:

- Category 1, well-supported, efficacious treatment: there are two or more randomized controlled outcome studies comparing the target treatment to an appropriate alternative treatment and showing a significant advantage to the target treatment.

- Category 2, supported and probably efficacious treatment: there are positive outcomes of nonrandomized designs with some form of control, which may involve a non-treatment group.

- Category 3, supported and acceptable treatment: the intervention is supported by one controlled or uncontrolled study, or by a series of single-subject studies, or by work with a different population than the one of interest.

- Category 4, promising and acceptable treatment: the intervention has no support except general acceptance and clinical anecdotal literature; however, any evidence of possible harm excludes treatments from this category.

- Category 5, innovative and novel treatment: the intervention is not thought to be harmful, but is not widely used or discussed in the literature.

- Category 6, concerning treatment: the treatment has the possibility of doing harm, as well as having unknown or inappropriate theoretical foundations.[16]

Khan et al.

A protocol for evaluation of research quality was suggested by a report from the Centre for Reviews and Dissemination, prepared by Khan et al. and intended as a general method for assessing both medical and psychosocial interventions. While strongly encouraging the use of randomized designs, this protocol noted that such designs were useful only if they met demanding criteria, such as true randomization and concealment of the assigned treatment group from the client and from others, including the individuals assessing the outcome. The Khan et al. protocol emphasized the need to make comparisons on the basis of "intention to treat" in order to avoid problems related to greater attrition in one group. The Khan et al. protocol also presented demanding criteria for nonrandomized studies, including matching of groups on potential confounding variables and adequate descriptions of groups and treatments at every stage, and concealment of treatment choice from persons assessing the outcomes. This protocol did not provide a classification of levels of evidence, but included or excluded treatments from classification as evidence-based depending on whether the research met the stated standards.[17]

U.S. National Registry of Evidence-Based Practices and Programs

An assessment protocol has been developed by the U.S. National Registry of Evidence-Based Practices and Programs (NREPP). Evaluation under this protocol occurs only if an intervention has already had one or more positive outcomes reported with a probability of less than .05, if these have been published in a peer-reviewed journal or an evaluation report, and if documentation such as training materials has been made available. The NREPP evaluation, which assigns quality ratings from 0 to 4 to certain criteria, examines reliability and validity of outcome measures used in the research, evidence for intervention fidelity (predictable use of the treatment in the same way every time), levels of missing data and attrition, potential confounding variables, and the appropriateness of statistical handling, including sample size.[18]
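The three preconditions for an NREPP evaluation, and the 0 to 4 range of its quality ratings, can be sketched as simple checks. This is an illustration under stated assumptions, not NREPP's actual procedure: the `Submission` class, its field names, and both function names are hypothetical, and no rule for combining the ratings is shown because the description above does not give one.

```python
from dataclasses import dataclass, field


@dataclass
class Submission:
    """Hypothetical record of an intervention submitted for review."""
    positive_outcome_p_values: list = field(default_factory=list)
    in_peer_reviewed_journal_or_evaluation_report: bool = False
    documentation_available: bool = False  # e.g. training materials


def eligible_for_evaluation(s):
    """Return True only if all three preconditions described above hold."""
    # At least one positive outcome reported with a probability below .05.
    has_significant_outcome = any(p < 0.05 for p in s.positive_outcome_p_values)
    return (
        has_significant_outcome
        and s.in_peer_reviewed_journal_or_evaluation_report
        and s.documentation_available
    )


def validate_ratings(ratings):
    """Check that every criterion rating lies in the 0 to 4 range."""
    for criterion, score in ratings.items():
        if not 0 <= score <= 4:
            raise ValueError(f"{criterion}: rating {score} is outside 0-4")
    return ratings


if __name__ == "__main__":
    s = Submission([0.03, 0.20], True, True)
    print(eligible_for_evaluation(s))
```

Keeping eligibility separate from rating mirrors the text: the preconditions decide whether an evaluation happens at all, while the ratings describe the research once it is evaluated.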

History

Canada

The term was first used in a 1979 report by the "Canadian Task Force on the Periodic Health Examination" (CTF) to "grade the effectiveness of an intervention according to the quality of evidence obtained".[19]: 1195

The task force used three levels, subdividing level II:

- Level I: Evidence from at least one randomized controlled trial.

- Level II-1: Evidence from at least one well designed cohort study or case control study, preferably from more than one center or research group.

- Level II-2: Comparisons between times and places with or without the intervention.

- Level III: Opinions of respected authorities, based on clinical experience, descriptive studies or reports of expert committees.

The CTF graded their recommendations on a 5-point A–E scale: A: Good level of evidence for the recommendation to consider a condition, B: Fair level of evidence for the recommendation to consider a condition, C: Poor level of evidence for the recommendation to consider a condition, D: Fair level of evidence for the recommendation to exclude the condition, and E: Good level of evidence for the recommendation to exclude the condition from consideration.[19]: 1195

The CTF updated their report in 1984,[20] in 1986[21] and 1987.[22]

United States

In 1988, the United States Preventive Services Task Force (USPSTF) came out with its guidelines based on the CTF using the same three levels, further subdividing level II.[23]

- Level I: Evidence obtained from at least one properly designed randomized controlled trial.

- Level II-1: Evidence obtained from well-designed controlled trials without randomization.

- Level II-2: Evidence obtained from well-designed cohort or case-control analytic studies, preferably from more than one center or research group.

- Level II-3: Evidence obtained from multiple time series designs with or without the intervention. Dramatic results in uncontrolled trials might also be regarded as this type of evidence.

- Level III: Opinions of respected authorities, based on clinical experience, descriptive studies, or reports of expert committees.

Over the years many more grading systems have been described.[24]

United Kingdom

In September 2000, the Oxford (UK) Centre for Evidence-Based Medicine (CEBM) published its Levels of Evidence, guidelines for 'levels' of evidence regarding claims about prognosis, diagnosis, treatment benefits, treatment harms, and screening. They addressed not only therapy and prevention, but also diagnostic tests, prognostic markers, and harm. The original CEBM Levels were first released for Evidence-Based On Call to make the process of finding evidence feasible and its results explicit. As published in 2009,[25] they are:

- 1a: Systematic reviews (with homogeneity) of randomized controlled trials

- 1b: Individual randomized controlled trials (with narrow confidence interval)

- 1c: All or none (when all patients died before the treatment became available, but some now survive on it; or when some patients died before the treatment became available, but none now die on it.)

- 2a: Systematic reviews (with homogeneity) of cohort studies

- 2b: Individual cohort study or low quality randomized controlled trials (e.g. <80% follow-up)

- 2c: "Outcomes" Research; ecological studies

- 3a: Systematic review (with homogeneity) of case-control studies

- 3b: Individual case-control study

- 4: Case series (and poor quality cohort and case-control studies)

- 5: Expert opinion without explicit critical appraisal, or based on physiology, bench research or "first principles"
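Because the 2009 levels form an ordered scale, they can be represented as a lookup table and used to sort pieces of evidence. The sketch below is only an illustration: the names `CEBM_2009_LEVELS`, `RANK`, and `strongest_first` are hypothetical, the example studies are made up, and a sort of this kind remains a heuristic ordering rather than a substitute for appraising each study.

```python
# The 2009 Oxford CEBM levels listed above, strongest first.
CEBM_2009_LEVELS = ["1a", "1b", "1c", "2a", "2b", "2c", "3a", "3b", "4", "5"]

# Map each level label to its position in the hierarchy (0 = strongest).
RANK = {level: position for position, level in enumerate(CEBM_2009_LEVELS)}


def strongest_first(items):
    """Sort (description, level) pairs from strongest to weakest level.

    Raises KeyError for a label that is not one of the 2009 levels.
    """
    return sorted(items, key=lambda item: RANK[item[1]])


if __name__ == "__main__":
    # Made-up examples, used only to show the ordering.
    evidence = [
        ("case series", "4"),
        ("systematic review of RCTs", "1a"),
        ("individual cohort study", "2b"),
    ]
    for description, level in strongest_first(evidence):
        print(level, description)
```

A plain list is used instead of comparing the labels as strings, so the order is stated explicitly rather than depending on how labels such as "1c" and "2a" happen to compare.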

In 2011, an international team redesigned the Oxford CEBM Levels to make it more understandable and to take into account recent developments in evidence ranking schemes. The Levels have been used by patients, clinicians and also to develop clinical guidelines including recommendations for the optimal use of phototherapy and topical therapy in psoriasis[27] and guidelines for the use of the BCLC staging system for diagnosing and monitoring hepatocellular carcinoma in Canada.[28]

Global

In 2007, the World Cancer Research Fund grading system described four levels: convincing, probable, possible and insufficient evidence.[29] All Global Burden of Disease Studies have used it to evaluate epidemiologic evidence supporting causal relationships.[30]

Proponents

Wilson et al. in 1995,[31] Hadorn et al. in 1996[32] and Atkins et al. in 1996[33] described and defended various types of grading systems.

Criticism

In 2011, a systematic review of the critical literature found three kinds of criticism: procedural aspects of EBM (especially from Cartwright, Worrall and Howick),[34] greater than expected fallibility of EBM (Ioannidis and others), and EBM being incomplete as a philosophy of science (Ashcroft and others).[35][clarification needed] Rawlins[36] and Bluhm note that EBM limits the ability of research results to inform the care of individual patients, and that to understand the causes of diseases both population-level and laboratory research are necessary. The EBM hierarchy of evidence does not take into account research on the safety and efficacy of medical interventions. RCTs should be designed "to elucidate within-group variability, which can only be done if the hierarchy of evidence is replaced by a network that takes into account the relationship between epidemiological and laboratory research".[37]

The hierarchy of evidence produced by a study design has been questioned, because guidelines have "failed to properly define key terms, weight the merits of certain non-randomized controlled trials, and employ a comprehensive list of study design limitations".[38]

Stegenga has specifically criticized the placement of meta-analyses at the top of such hierarchies.[39] The assumption that RCTs ought to be necessarily near the top of such hierarchies has been criticized by Worrall[40] and Cartwright.[41]

In 2005, Ross Upshur said that EBM claims to be a normative guide to being a better physician, but is not a philosophical doctrine.[42]

Borgerson in 2009 wrote that the justifications for the hierarchy levels are not absolute and do not epistemically justify them, but that "medical researchers should pay closer attention to social mechanisms for managing pervasive biases".[43] La Caze noted that basic science resides on the lower tiers of EBM though it "plays a role in specifying experiments, but also analysing and interpreting the data."[44]

Concato said in 2004 that the hierarchy allowed RCTs too much authority and that not all research questions could be answered through RCTs, because of either practical or ethical issues. Even when evidence is available from high-quality RCTs, evidence from other study types may still be relevant.[45] Stegenga opined that evidence assessment schemes are unreasonably constraining and less informative than other schemes now available.[5]

In his 2015 PhD thesis on the various hierarchies of evidence in medicine, Christopher J Blunt concludes that modest interpretations such as La Caze's model, conditional hierarchies like GRADE, and heuristic approaches as defended by Howick et al. all survive previous philosophical criticism, but argues that modest interpretations are so weak that they are unhelpful for clinical practice. For example, "GRADE and similar conditional models omit clinically relevant information, such as information about variation in treatments' effects and the causes of different responses to therapy; and that heuristic approaches lack the necessary empirical support". Blunt further concludes that "hierarchies are a poor basis for the application of evidence in clinical practice", since the core assumption behind hierarchies of evidence, that "information about average treatment effects backed by high-quality evidence can justify strong recommendations", is untenable, and hence the evidence from individual studies should be appraised in isolation.[46]