In mathematics and science, a nonlinear system (or a non-linear system) is a system in which the change of the output is not proportional to the change of the input.[1][2] Nonlinear problems are of interest to engineers, biologists,[3][4][5] physicists,[6][7] mathematicians, and many other scientists since most systems are inherently nonlinear in nature.[8] Nonlinear dynamical systems, describing changes in variables over time, may appear chaotic, unpredictable, or counterintuitive, contrasting with much simpler linear systems.

Typically, the behavior of a nonlinear system is described in mathematics by a nonlinear system of equations, which is a set of simultaneous equations in which the unknowns (or the unknown functions in the case of differential equations) appear as variables of a polynomial of degree higher than one or in the argument of a function which is not a polynomial of degree one. In other words, in a nonlinear system of equations, the equation(s) to be solved cannot be written as a linear combination of the unknown variables or functions that appear in them. Systems can be defined as nonlinear, regardless of whether known linear functions appear in the equations. In particular, a differential equation is linear if it is linear in terms of the unknown function and its derivatives, even if nonlinear in terms of the other variables appearing in it.

As nonlinear dynamical equations are difficult to solve, nonlinear systems are commonly approximated by linear equations (linearization). This works well up to some accuracy and some range for the input values, but some interesting phenomena such as solitons, chaos,[9] and singularities are hidden by linearization. It follows that some aspects of the dynamic behavior of a nonlinear system can appear to be counterintuitive, unpredictable or even chaotic. Although such chaotic behavior may resemble random behavior, it is in fact not random. For example, some aspects of the weather are seen to be chaotic, where simple changes in one part of the system produce complex effects throughout. This nonlinearity is one of the reasons why accurate long-term forecasts are impossible with current technology.

Some authors use the term nonlinear science for the study of nonlinear systems. This term is disputed by others:

Using a term like nonlinear science is like referring to the bulk of zoology as the study of non-elephant animals. (Stanisław Ulam[10])

Definition

In mathematics, a linear map (or linear function) $f(x)$ is one which satisfies both of the following properties:

- Additivity or superposition principle: $f(x + y) = f(x) + f(y)$

- Homogeneity: $f(\alpha x) = \alpha f(x)$

Additivity implies homogeneity for any rational α, and, for continuous functions, for any real α. For a complex α, homogeneity does not follow from additivity. For example, an antilinear map is additive but not homogeneous. The conditions of additivity and homogeneity are often combined in the superposition principle

$$f(\alpha x + \beta y) = \alpha f(x) + \beta f(y)$$

An equation written as

$$f(x) = C$$

is called linear if $f(x)$ is a linear map (as defined above) and nonlinear otherwise. The equation is called homogeneous if $C = 0$ and $f(x)$ is a homogeneous function.

The definition $f(x) = C$ is very general in that $x$ can be any sensible mathematical object (number, vector, function, etc.), and the function $f(x)$ can literally be any mapping, including integration or differentiation with associated constraints (such as boundary values). If $f(x)$ contains differentiation with respect to $x$, the result will be a differential equation.
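The combined superposition condition can be probed numerically. The sketch below is only an illustration: the helper name `satisfies_superposition` and the three sample functions are assumptions introduced here, and a passing check on random inputs is evidence of linearity, not a proof.

```python
import random

def satisfies_superposition(f, trials=1000, tol=1e-9):
    """Test f(a*x + b*y) == a*f(x) + b*f(y) on random real inputs.

    Returns False as soon as one counterexample is found.
    """
    rng = random.Random(0)  # fixed seed so the check is reproducible
    for _ in range(trials):
        x, y, a, b = (rng.uniform(-10.0, 10.0) for _ in range(4))
        lhs = f(a * x + b * y)
        rhs = a * f(x) + b * f(y)
        # Relative tolerance guards against floating-point round-off.
        if abs(lhs - rhs) > tol * max(1.0, abs(lhs), abs(rhs)):
            return False
    return True

# Hypothetical sample functions of one real variable.
linear = lambda x: 3.0 * x          # additive and homogeneous
affine = lambda x: 3.0 * x + 1.0    # a straight line, but not a linear map
quadratic = lambda x: x * x         # polynomial of degree two

print(satisfies_superposition(linear))
print(satisfies_superposition(affine))
print(satisfies_superposition(quadratic))
```

The affine case is worth noting: its graph is a line, yet it fails the test because of the constant term, so in the terminology above it is not a linear map.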

Nonlinear systems of equations

A nonlinear system of equations consists of a set of equations in several variables such that at least one of them is not a linear equation.

For a single equation of the form $f(x) = 0$, many methods have been designed; see Root-finding algorithm. In the case where f is a polynomial, one has a polynomial equation such as $x^2 + x - 1 = 0$. The general root-finding algorithms apply to polynomial roots, but they generally do not find all the roots, and a failure to find a root does not imply that there are no roots. Specific methods for polynomials allow finding all roots or the real roots; see real-root isolation.

Solving systems of polynomial equations, that is, finding the common zeros of a set of several polynomials in several variables, is a difficult problem for which elaborate algorithms have been designed, such as Gröbner basis algorithms.[11]

For the general case of a system of equations formed by equating to zero several differentiable functions, the main method is Newton's method and its variants. Generally they may provide a solution, but do not provide any information on the number of solutions.
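As a minimal sketch of Newton's method for a system, consider two equations in two unknowns. The example system ($x^2 + y^2 = 4$, $xy = 1$), the function name `newton_2d` and the starting points are assumptions chosen for illustration; the 2×2 linear step is solved with Cramer's rule to keep the code dependency-free.

```python
def newton_2d(F, J, x, y, tol=1e-12, max_iter=50):
    """Newton iteration for F(x, y) = (0, 0) with Jacobian J(x, y)."""
    for _ in range(max_iter):
        f1, f2 = F(x, y)
        if max(abs(f1), abs(f2)) < tol:
            return x, y
        (a, b), (c, d) = J(x, y)
        det = a * d - b * c
        if det == 0.0:
            raise ZeroDivisionError("singular Jacobian")
        # Solve J * (dx, dy) = -(f1, f2) by Cramer's rule.
        dx = (-f1 * d + f2 * b) / det
        dy = (-a * f2 + c * f1) / det
        x, y = x + dx, y + dy
    raise RuntimeError("Newton iteration did not converge")

# Hypothetical example system: a circle of radius 2 and a hyperbola.
def F(x, y):
    return x * x + y * y - 4.0, x * y - 1.0

def J(x, y):
    return (2.0 * x, 2.0 * y), (y, x)

root_a = newton_2d(F, J, 2.0, 0.5)
root_b = newton_2d(F, J, 0.5, 2.0)
print(root_a, root_b)
```

The two starting points lead to two different solutions, and nothing in either run reveals that this system has four real solutions in total. This matches the caveat above: the method may provide a solution but gives no information on how many there are.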

Nonlinear recurrence relations

A nonlinear recurrence relation defines successive terms of a sequence as a nonlinear function of preceding terms. Examples of nonlinear recurrence relations are the logistic map and the relations that define the various Hofstadter sequences. Nonlinear discrete models that represent a wide class of nonlinear recurrence relationships include the NARMAX (Nonlinear Autoregressive Moving Average with eXogenous inputs) model and the related nonlinear system identification and analysis procedures.[12] These approaches can be used to study a wide class of complex nonlinear behaviors in the time, frequency, and spatio-temporal domains.
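The logistic map, in its usual form $x_{n+1} = r x_n (1 - x_n)$, makes a compact illustration. The sketch below is not taken from the article: the function name `logistic_orbit` and the parameter values are assumptions picked to contrast a settling orbit with a sensitive one.

```python
def logistic_orbit(r, x0, n):
    """Return [x_0, x_1, ..., x_n] for x_{k+1} = r * x_k * (1 - x_k)."""
    xs = [x0]
    for _ in range(n):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs

# r = 2.5: the orbit settles on the fixed point 1 - 1/r = 0.6.
settled = logistic_orbit(2.5, 0.2, 200)[-1]

# r = 4.0: two orbits that start 1e-10 apart separate quickly.
orbit_a = logistic_orbit(4.0, 0.2, 60)
orbit_b = logistic_orbit(4.0, 0.2 + 1e-10, 60)
largest_gap = max(abs(a - b) for a, b in zip(orbit_a, orbit_b))

print(settled, largest_gap)
```

The same one-line recurrence produces both behaviors; only the parameter `r` changes.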

Nonlinear differential equations

A system of differential equations is said to be nonlinear if it is not a system of linear equations. Problems involving nonlinear differential equations are extremely diverse, and methods of solution or analysis are problem dependent. Examples of nonlinear differential equations are the Navier–Stokes equations in fluid dynamics and the Lotka–Volterra equations in biology.

One of the greatest difficulties of nonlinear problems is that it is not generally possible to combine known solutions into new solutions. In linear problems, for example, a family of linearly independent solutions can be used to construct general solutions through the superposition principle. A good example of this is one-dimensional heat transport with Dirichlet boundary conditions, the solution of which can be written as a time-dependent linear combination of sinusoids of differing frequencies; this makes solutions very flexible. It is often possible to find several very specific solutions to nonlinear equations; however, the lack of a superposition principle prevents the construction of new solutions.

Ordinary differential equations

First-order ordinary differential equations are often exactly solvable by separation of variables, especially for autonomous equations. For example, the nonlinear equation

$$\frac{du}{dx} = -u^2$$

has $u = \frac{1}{x + C}$ as a general solution (and also the special solution $u = 0$, corresponding to the limit of the general solution when C tends to infinity). The equation is nonlinear because it may be written as

$$\frac{du}{dx} + u^2 = 0$$

and the left-hand side of the equation is not a linear function of $u$ and its derivatives. Note that if the $u^2$ term were replaced with $u$, the problem would be linear (the exponential decay problem).
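The closed-form solution $u = 1/(x + C)$ of $du/dx = -u^2$ can be cross-checked numerically, and the same check shows that the sum of two solutions is not a solution. This is a sketch under stated assumptions: the helper names `rk4_scalar` and `residual`, the step counts and the choice of $C = 1$ and $C = 2$ are all illustrative.

```python
def rk4_scalar(f, x0, u0, x_end, steps):
    """Integrate du/dx = f(x, u) from x0 to x_end with classical RK4."""
    h = (x_end - x0) / steps
    x, u = x0, u0
    for _ in range(steps):
        k1 = f(x, u)
        k2 = f(x + h / 2, u + h * k1 / 2)
        k3 = f(x + h / 2, u + h * k2 / 2)
        k4 = f(x + h, u + h * k3)
        u += h * (k1 + 2 * k2 + 2 * k3 + k4) / 6
        x += h
    return u

def residual(u, x, h=1e-5):
    """Approximate du/dx + u^2 at x using a central difference."""
    return (u(x + h) - u(x - h)) / (2 * h) + u(x) ** 2

# u(0) = 1 corresponds to C = 1, so the exact value at x = 1 is 1/2.
u_numeric = rk4_scalar(lambda x, u: -u * u, 0.0, 1.0, 1.0, 1000)

u1 = lambda x: 1.0 / (x + 1.0)      # solution with C = 1
u2 = lambda x: 1.0 / (x + 2.0)      # solution with C = 2
u_sum = lambda x: u1(x) + u2(x)     # candidate built by superposition

print(u_numeric, residual(u1, 0.5), residual(u_sum, 0.0))
```

The residual of `u_sum` equals the cross term $2 u_1 u_2$, which is exactly what a linear equation would not produce.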

Second and higher order ordinary differential equations (more generally, systems of nonlinear equations) rarely yield closed-form solutions, though implicit solutions and solutions involving nonelementary integrals are encountered.

Common methods for the qualitative analysis of nonlinear ordinary differential equations include:

- Examination of any conserved quantities, especially in Hamiltonian systems

- Examination of dissipative quantities (see Lyapunov function) analogous to conserved quantities

- Linearization via Taylor expansion

- Change of variables into something easier to study

- Bifurcation theory

- Perturbation methods (can be applied to algebraic equations too)

- Existence of finite-duration solutions,[13] which can occur under specific conditions for some nonlinear ordinary differential equations.

Partial differential equations

The most common basic approach to studying nonlinear partial differential equations is to change the variables (or otherwise transform the problem) so that the resulting problem is simpler (possibly linear). Sometimes, the equation may be transformed into one or more ordinary differential equations, as seen in separation of variables, which is always useful whether or not the resulting ordinary differential equation(s) is solvable.

Another common (though less mathematical) tactic, often exploited in fluid and heat mechanics, is to use scale analysis to simplify a general, natural equation in a certain specific boundary value problem. For example, the (very) nonlinear Navier–Stokes equations can be simplified into one linear partial differential equation in the case of transient, laminar, one-dimensional flow in a circular pipe; the scale analysis provides conditions under which the flow is laminar and one-dimensional and also yields the simplified equation.

Other methods include examining the characteristics and using the methods outlined above for ordinary differential equations.

Pendula

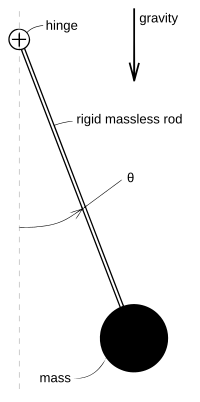

A classic, extensively studied nonlinear problem is the dynamics of a frictionless pendulum under the influence of gravity. Using Lagrangian mechanics, it may be shown[14] that the motion of a pendulum can be described by the dimensionless nonlinear equation

$$\frac{d^2\theta}{dt^2} + \sin(\theta) = 0$$

where gravity points "downwards" and $\theta$ is the angle the pendulum forms with its rest position, as shown in the figure at right. One approach to "solving" this equation is to use $d\theta/dt$ as an integrating factor, which would eventually yield

$$\int \frac{d\theta}{\sqrt{C_0 + 2\cos(\theta)}} = t + C_1$$

which is an implicit solution involving an elliptic integral. This "solution" generally does not have many uses because most of the nature of the solution is hidden in the nonelementary integral (nonelementary unless $C_0 = 2$).
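The intermediate steps of the integrating-factor approach can be written out as follows for the dimensionless pendulum equation $\ddot\theta + \sin(\theta) = 0$. This is a sketch of the standard calculation; the constant names $C_0$ and $C_1$ and the choice of the positive square root are assumptions made here for definiteness.

```latex
\begin{align}
\frac{d\theta}{dt}\,\frac{d^2\theta}{dt^2} + \sin(\theta)\,\frac{d\theta}{dt} &= 0
  && \text{multiply by } \tfrac{d\theta}{dt} \\
\frac{d}{dt}\left[\frac{1}{2}\left(\frac{d\theta}{dt}\right)^2 - \cos(\theta)\right] &= 0
  && \text{recognize a total derivative} \\
\left(\frac{d\theta}{dt}\right)^2 &= C_0 + 2\cos(\theta)
  && \text{integrate once} \\
\int \frac{d\theta}{\sqrt{C_0 + 2\cos(\theta)}} &= t + C_1
  && \text{separate variables}
\end{align}
```

For $C_0 = 2$ the radicand becomes $2 + 2\cos(\theta) = 4\cos^2(\theta/2)$, so the square root simplifies and the integral is elementary in that one case.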

Another way to approach the problem is to linearize any nonlinearity (the sine function term in this case) at the various points of interest through Taylor expansions. For example, the linearization at $\theta = 0$, called the small angle approximation, is

$$\frac{d^2\theta}{dt^2} + \theta = 0$$

since $\sin(\theta) \approx \theta$ for $\theta \approx 0$. This is a simple harmonic oscillator corresponding to oscillations of the pendulum near the bottom of its path. Another linearization would be at $\theta = \pi$, corresponding to the pendulum being straight up:

$$\frac{d^2\theta}{dt^2} + \pi - \theta = 0$$

since $\sin(\theta) \approx \pi - \theta$ for $\theta \approx \pi$. The solution to this problem involves hyperbolic sinusoids. Unlike the small angle approximation, this approximation is unstable, meaning that $|\theta|$ will usually grow without limit, though bounded solutions are possible. This corresponds to the difficulty of balancing a pendulum upright; it is literally an unstable state.

One more interesting linearization is possible around $\theta = \pi/2$, around which $\sin(\theta) \approx 1$:

$$\frac{d^2\theta}{dt^2} + 1 = 0$$

This corresponds to a free fall problem. A very useful qualitative picture of the pendulum's dynamics may be obtained by piecing together such linearizations, as seen in the figure at right. Other techniques may be used to find (exact) phase portraits and approximate periods.
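How far the small angle approximation can be trusted is easy to gauge numerically. The sketch below integrates the dimensionless equation $\ddot\theta + \sin(\theta) = 0$ with a classical fourth-order Runge–Kutta scheme and compares it with the linearized solution $\theta_0 \cos(t)$ for a release from rest. The function names, step count, time window and release angles are assumptions chosen for illustration.

```python
import math

def simulate_pendulum(theta0, omega0, t_end, steps):
    """RK4 integration of theta'' + sin(theta) = 0; returns [(t, theta), ...]."""
    h = t_end / steps

    def deriv(theta, omega):
        # First-order form: theta' = omega, omega' = -sin(theta).
        return omega, -math.sin(theta)

    t, theta, omega = 0.0, theta0, omega0
    out = [(t, theta)]
    for _ in range(steps):
        k1t, k1w = deriv(theta, omega)
        k2t, k2w = deriv(theta + h * k1t / 2, omega + h * k1w / 2)
        k3t, k3w = deriv(theta + h * k2t / 2, omega + h * k2w / 2)
        k4t, k4w = deriv(theta + h * k3t, omega + h * k3w)
        theta += h * (k1t + 2 * k2t + 2 * k3t + k4t) / 6
        omega += h * (k1w + 2 * k2w + 2 * k3w + k4w) / 6
        t += h
        out.append((t, theta))
    return out

def max_gap_to_small_angle(theta0, t_end=10.0, steps=2000):
    """Largest |theta_nonlinear(t) - theta0*cos(t)| for a release from rest."""
    path = simulate_pendulum(theta0, 0.0, t_end, steps)
    return max(abs(theta - theta0 * math.cos(t)) for t, theta in path)

print(max_gap_to_small_angle(0.1))   # small release angle
print(max_gap_to_small_angle(2.5))   # large release angle
```

For the small release angle the two curves stay close over the whole window, while for the large one they drift apart, mainly because the true period grows with amplitude and the linearized period does not.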

Types of nonlinear dynamic behaviors

- Amplitude death – any oscillations present in the system cease due to some kind of interaction with another system or feedback by the same system

- Chaos – values of a system cannot be predicted indefinitely far into the future, and fluctuations are aperiodic

- Multistability – the presence of two or more stable states

- Solitons – self-reinforcing solitary waves

- Limit cycles – asymptotic periodic orbits to which destabilized fixed points are attracted.

- Self-oscillations – feedback oscillations taking place in open dissipative physical systems.
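A limit cycle can be seen numerically in the Van der Pol oscillator, taken here in its standard form $\ddot{x} - \mu (1 - x^2)\dot{x} + x = 0$. The sketch below is illustrative only: the function name, the value $\mu = 1$, the step size and the two starting points are assumptions, and the late-time amplitude is estimated simply as the largest $|x|$ over the final stretch of the run.

```python
def van_der_pol_amplitude(x0, mu=1.0, t_end=100.0, h=0.01, tail=20.0):
    """Integrate the Van der Pol equation with RK4, starting from (x0, 0).

    Returns max |x| over the last `tail` time units as an amplitude estimate.
    """
    def deriv(x, v):
        # First-order form: x' = v, v' = mu * (1 - x^2) * v - x.
        return v, mu * (1.0 - x * x) * v - x

    steps = int(round(t_end / h))
    tail_start = t_end - tail
    x, v, t = x0, 0.0, 0.0
    amplitude = 0.0
    for _ in range(steps):
        k1x, k1v = deriv(x, v)
        k2x, k2v = deriv(x + h * k1x / 2, v + h * k1v / 2)
        k3x, k3v = deriv(x + h * k2x / 2, v + h * k2v / 2)
        k4x, k4v = deriv(x + h * k3x, v + h * k3v)
        x += h * (k1x + 2 * k2x + 2 * k3x + k4x) / 6
        v += h * (k1v + 2 * k2v + 2 * k3v + k4v) / 6
        t += h
        if t >= tail_start:
            amplitude = max(amplitude, abs(x))
    return amplitude

amp_from_inside = van_der_pol_amplitude(0.1)   # starts near the origin
amp_from_outside = van_der_pol_amplitude(4.0)  # starts far from the origin
print(amp_from_inside, amp_from_outside)
```

Both runs end with nearly the same amplitude even though they start on opposite sides of the cycle. A linear oscillator cannot behave this way, since its amplitude scales with the initial condition.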

Examples of nonlinear equations

- Algebraic Riccati equation

- Ball and beam system

- Bellman equation for optimal policy

- Boltzmann equation

- Colebrook equation

- General relativity

- Ginzburg–Landau theory

- Ishimori equation

- Kadomtsev–Petviashvili equation

- Korteweg–de Vries equation

- Landau–Lifshitz–Gilbert equation

- Liénard equation

- Navier–Stokes equations of fluid dynamics

- Nonlinear optics

- Nonlinear Schrödinger equation

- Power-flow study

- Richards equation for unsaturated water flow

- Self-balancing unicycle

- Sine-Gordon equation

- Van der Pol oscillator

- Vlasov equation

References

1. "Explained: Linear and nonlinear systems". MIT News. Retrieved 2018-06-30.

2. "Nonlinear systems, Applied Mathematics - University of Birmingham". www.birmingham.ac.uk. Retrieved 2018-06-30.

3. "Nonlinear Biology", The Nonlinear Universe, The Frontiers Collection, Springer Berlin Heidelberg, 2007, pp. 181–276, doi:10.1007/978-3-540-34153-6_7, ISBN 9783540341529

4. Korenberg, Michael J.; Hunter, Ian W. (March 1996). "The identification of nonlinear biological systems: Volterra kernel approaches". Annals of Biomedical Engineering. 24 (2): 250–268. doi:10.1007/bf02667354. ISSN 0090-6964. PMID 8678357. S2CID 20643206.

5. Mosconi, Francesco; Julou, Thomas; Desprat, Nicolas; Sinha, Deepak Kumar; Allemand, Jean-François; Vincent Croquette; Bensimon, David (2008). "Some nonlinear challenges in biology". Nonlinearity. 21 (8): T131. Bibcode:2008Nonli..21..131M. doi:10.1088/0951-7715/21/8/T03. ISSN 0951-7715. S2CID 119808230.

6. Gintautas, V. (2008). "Resonant forcing of nonlinear systems of differential equations". Chaos. 18 (3): 033118. arXiv:0803.2252. Bibcode:2008Chaos..18c3118G. doi:10.1063/1.2964200. PMID 19045456. S2CID 18345817.

7. Stephenson, C.; et al. (2017). "Topological properties of a self-assembled electrical network via ab initio calculation". Sci. Rep. 7: 41621. Bibcode:2017NatSR...741621S. doi:10.1038/srep41621. PMC 5290745. PMID 28155863.

8. de Canete, Javier, Cipriano Galindo, and Inmaculada Garcia-Moral (2011). System Engineering and Automation: An Interactive Educational Approach. Berlin: Springer. p. 46. ISBN 978-3642202292. Retrieved 20 January 2018.

9. Nonlinear Dynamics I: Chaos Archived 2008-02-12 at the Wayback Machine at MIT's OpenCourseWare

10. Campbell, David K. (25 November 2004). "Nonlinear physics: Fresh breather". Nature. 432 (7016): 455–456. Bibcode:2004Natur.432..455C. doi:10.1038/432455a. ISSN 0028-0836. PMID 15565139. S2CID 4403332.

11. Lazard, D. (2009). "Thirty years of Polynomial System Solving, and now?". Journal of Symbolic Computation. 44 (3): 222–231. doi:10.1016/j.jsc.2008.03.004.

12. Billings S.A. "Nonlinear System Identification: NARMAX Methods in the Time, Frequency, and Spatio-Temporal Domains". Wiley, 2013

13. Vardia T. Haimo (1985). "Finite Time Differential Equations". 1985 24th IEEE Conference on Decision and Control. pp. 1729–1733. doi:10.1109/CDC.1985.268832. S2CID 45426376.

14. David Tong: Lectures on Classical Dynamics

Further reading

- Diederich Hinrichsen and Anthony J. Pritchard (2005). Mathematical Systems Theory I - Modelling, State Space Analysis, Stability and Robustness. Springer Verlag. ISBN 9783540441250.

- Jordan, D. W.; Smith, P. (2007). Nonlinear Ordinary Differential Equations (fourth ed.). Oxford University Press. ISBN 978-0-19-920824-1.

- Khalil, Hassan K. (2001). Nonlinear Systems. Prentice Hall. ISBN 978-0-13-067389-3.

- Kreyszig, Erwin (1998). Advanced Engineering Mathematics. Wiley. ISBN 978-0-471-15496-9.

- Sontag, Eduardo (1998). Mathematical Control Theory: Deterministic Finite Dimensional Systems. Second Edition. Springer. ISBN 978-0-387-98489-6.

External links

- Command and Control Research Program (CCRP)

- New England Complex Systems Institute: Concepts in Complex Systems

- Nonlinear Dynamics I: Chaos at MIT's OpenCourseWare

- Nonlinear Model Library – (in MATLAB) a Database of Physical Systems

- The Center for Nonlinear Studies at Los Alamos National Laboratory
