Notation and examples

For example, suppose that four numbers are observed or recorded, resulting in a sample of size 4. If the sample values are 6, 9, 3, 8, the order statistics would be denoted

$$x_{(1)}=3,\ \ x_{(2)}=6,\ \ x_{(3)}=8,\ \ x_{(4)}=9,$$

where the subscript (i) enclosed in parentheses indicates the ith order statistic of the sample.

The first order statistic (or smallest order statistic) is always the minimum of the sample, that is,

$$X_{(1)}=\min\{X_{1},\ldots ,X_{n}\}$$

where, following a common convention, we use upper-case letters to refer to random variables, and lower-case letters (as above) to refer to their actual observed values.

Similarly, for a sample of size n, the nth order statistic (or largest order statistic) is the maximum, that is,

$$X_{(n)}=\max\{X_{1},\ldots ,X_{n}\}.$$

The sample range is the difference between the maximum and minimum. It is a function of the order statistics:

$$\operatorname{Range}\{X_{1},\ldots ,X_{n}\}=X_{(n)}-X_{(1)}.$$

A similar important statistic in exploratory data analysis that is simply related to the order statistics is the sample interquartile range.

The sample median may or may not be an order statistic, since there is a single middle value only when the number n of observations is odd. More precisely, if n = 2m+1 for some integer m, then the sample median is $X_{(m+1)}$ and so is an order statistic. On the other hand, when n is even, n = 2m and there are two middle values, $X_{(m)}$ and $X_{(m+1)}$, and the sample median is some function of the two (usually the average) and hence not an order statistic. Similar remarks apply to all sample quantiles.
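The definitions above can be illustrated with a minimal Python sketch. The helper names (`order_statistics`, `sample_range`, `sample_median`) are hypothetical and chosen only for this illustration; the median uses the common averaging convention for even n.

```python
def order_statistics(sample):
    """Return the sample sorted in increasing order: x_(1) <= ... <= x_(n)."""
    return sorted(sample)


def sample_range(sample):
    """Difference between the largest and smallest order statistics."""
    ordered = order_statistics(sample)
    return ordered[-1] - ordered[0]


def sample_median(sample):
    """Middle order statistic for odd n, average of the two middle ones for even n."""
    ordered = order_statistics(sample)
    n = len(ordered)
    m = n // 2
    if n % 2 == 1:
        # n = 2m + 1: the median is x_(m+1), which is index m when counting from zero.
        return ordered[m]
    # n = 2m: average x_(m) and x_(m+1); the result need not be an order statistic.
    return (ordered[m - 1] + ordered[m]) / 2


sample = [6, 9, 3, 8]
print(order_statistics(sample))  # [3, 6, 8, 9]
print(sample_range(sample))      # 6
print(sample_median(sample))     # 7.0
```

For the sample 6, 9, 3, 8 the median 7 is not one of the observed values, which is the even-n case described above.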

Probabilistic analysis

Given any random variables X1, X2, ..., Xn, the order statistics X(1), X(2), ..., X(n) are also random variables, defined by sorting the values (realizations) of X1, ..., Xn in increasing order.

When the random variables X1, X2, ..., Xn form a sample, they are independent and identically distributed. This is the case treated below. In general, the random variables X1, ..., Xn can arise by sampling from more than one population. Then they are independent, but not necessarily identically distributed, and their joint probability distribution is given by the Bapat–Beg theorem.

From now on, we will assume that the random variables under consideration are continuous and, where convenient, we will also assume that they have a probability density function (PDF), that is, they are absolutely continuous. The peculiarities of the analysis of distributions assigning mass to points (in particular, discrete distributions) are discussed at the end.

Cumulative distribution function of order statistics

For a random sample as above, with cumulative distribution $F_{X}(x)$, the order statistics for that sample have cumulative distributions as follows[2] (where r specifies which order statistic):

$$F_{X_{(r)}}(x)=\sum_{j=r}^{n}\binom{n}{j}[F_{X}(x)]^{j}[1-F_{X}(x)]^{n-j}$$

The corresponding probability density function may be derived from this result, and is found to be

$$f_{X_{(r)}}(x)=\frac{n!}{(r-1)!(n-r)!}f_{X}(x)[F_{X}(x)]^{r-1}[1-F_{X}(x)]^{n-r}.$$

Moreover, there are two special cases whose CDFs are easy to compute:

$$F_{X_{(n)}}(x)=\operatorname{Prob}(\max\{X_{1},\ldots ,X_{n}\}\leq x)=[F_{X}(x)]^{n}$$

$$F_{X_{(1)}}(x)=\operatorname{Prob}(\min\{X_{1},\ldots ,X_{n}\}\leq x)=1-[1-F_{X}(x)]^{n}$$

Both follow from independence: the maximum is at most x exactly when all n observations are at most x, and the minimum exceeds x exactly when all n observations exceed x.
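As a quick numerical check, the binomial-sum form of the CDF can be compared with the two closed-form special cases. This is only a sketch: the function name `order_stat_cdf` is an assumption made for illustration, and the value 0.3 stands in for $F_X(x)$ at some arbitrary point x.

```python
from math import comb


def order_stat_cdf(F_x, r, n):
    """P(X_(r) <= x), given F_x = F_X(x): at least r of the n observations are <= x."""
    return sum(comb(n, j) * F_x**j * (1 - F_x)**(n - j) for j in range(r, n + 1))


F_x, n = 0.3, 5
# Maximum (r = n): the sum collapses to the single term F_x**n.
print(order_stat_cdf(F_x, n, n), F_x**n)
# Minimum (r = 1): the sum is the complement of "no observation is <= x".
print(order_stat_cdf(F_x, 1, n), 1 - (1 - F_x)**n)
```

Each printed pair should agree up to floating-point rounding.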

Probability distributions of order statistics

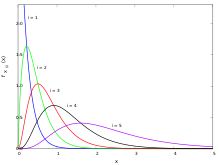

Order statistics sampled from a uniform distribution

In this section we show that the order statistics of the uniform distribution on the unit interval have marginal distributions belonging to the beta distribution family. We also give a simple method to derive the joint distribution of any number of order statistics, and finally translate these results to arbitrary continuous distributions using the cdf.

We assume throughout this section that $X_{1},X_{2},\ldots ,X_{n}$ is a random sample drawn from a continuous distribution with cdf $F_{X}$. Denoting $U_{i}=F_{X}(X_{i})$ we obtain the corresponding random sample $U_{1},\ldots ,U_{n}$ from the standard uniform distribution. Note that the order statistics also satisfy $U_{(i)}=F_{X}(X_{(i)})$.

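The probability density function of the order statistic $U_{(k)}$ is equal to[3]

$$f_{U_{(k)}}(u)=\frac{n!}{(k-1)!(n-k)!}u^{k-1}(1-u)^{n-k}$$

that is, the kth order statistic of the uniform distribution is a beta-distributed random variable.[3][4]

$$U_{(k)}\sim \operatorname{Beta}(k,n+1-k).$$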

The proof of these statements is as follows. For $U_{(k)}$ to be between u and u + du, it is necessary that exactly k − 1 elements of the sample are smaller than u, and that at least one is between u and u + du. The probability that more than one is in this latter interval is already $O(du^{2})$, so we have to calculate the probability that exactly k − 1, 1 and n − k observations fall in the intervals $(0,u)$, $(u,u+du)$ and $(u+du,1)$ respectively. This equals (refer to multinomial distribution for details)

$$\frac{n!}{(k-1)!(n-k)!}u^{k-1}\cdot du\cdot (1-u-du)^{n-k}$$

and the result follows.

The mean of this distribution is k / (n + 1).
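The stated mean can be checked numerically by integrating u times the Beta(k, n + 1 − k) density over the unit interval. The sketch below uses a plain midpoint rule; the function names and the step count are assumptions made for illustration, not part of the source.

```python
from math import factorial


def uniform_order_stat_pdf(u, k, n):
    """Density of the kth order statistic of n iid Uniform(0, 1) variables."""
    coef = factorial(n) // (factorial(k - 1) * factorial(n - k))
    return coef * u**(k - 1) * (1 - u)**(n - k)


def numeric_mean(k, n, steps=20_000):
    """Midpoint-rule approximation of the integral of u * f(u) over (0, 1)."""
    h = 1.0 / steps
    total = 0.0
    for i in range(steps):
        u = (i + 0.5) * h
        total += u * uniform_order_stat_pdf(u, k, n)
    return total * h


k, n = 2, 5
print(numeric_mean(k, n), k / (n + 1))
```

The two printed numbers should agree to several decimal places, consistent with the mean k / (n + 1).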

The joint distribution of the order statistics of the uniform distribution

Similarly, for i < j, the joint probability density function of the two order statistics U(i) < U(j) can be shown to be

$$f_{U_{(i)},U_{(j)}}(u,v)=n!\,\frac{u^{i-1}}{(i-1)!}\,\frac{(v-u)^{j-i-1}}{(j-i-1)!}\,\frac{(1-v)^{n-j}}{(n-j)!}$$

which is (up to terms of higher order than $O(du\,dv)$) the probability that i − 1, 1, j − 1 − i, 1 and n − j sample elements fall in the intervals $(0,u)$, $(u,u+du)$, $(u+du,v)$, $(v,v+dv)$, $(v+dv,1)$ respectively.

One reasons in an entirely analogous way to derive the higher-order joint distributions. Perhaps surprisingly, the joint density of the n order statistics turns out to be constant:

$$f_{U_{(1)},U_{(2)},\ldots ,U_{(n)}}(u_{1},u_{2},\ldots ,u_{n})=n!.$$

One way to understand this is that the unordered sample does have constant density equal to 1, and that there are n! different permutations of the sample corresponding to the same sequence of order statistics. This is related to the fact that 1/n! is the volume of the region $0<u_{1}<\cdots <u_{n}<1$. It is also related to another particularity of order statistics of uniform random variables: it follows from the BRS-inequality that the maximum expected number of uniform U(0,1] random variables one can choose from a sample of size n with a sum not exceeding $0<s<n/2$ is bounded above by $\sqrt{2sn}$, which is thus invariant on the set of all $s,n$ with constant product $sn$.

Using the above formulas, one can derive the distribution of the range of the order statistics, that is the distribution of $U_{(n)}-U_{(1)}$, i.e. maximum minus the minimum. More generally, for $n\geq k>j\geq 1$, $U_{(k)}-U_{(j)}$ also has a beta distribution:

$$U_{(k)}-U_{(j)}\sim \operatorname{Beta}(k-j,\,n-(k-j)+1),$$

which is the actual distribution of the difference.

Order statistics sampled from an exponential distribution

For $X_{1},X_{2},\ldots ,X_{n}$ a random sample of size n from an exponential distribution with parameter λ, the order statistics X(i) for i = 1,2,3, ..., n each have distribution

$$X_{(i)}\ \stackrel{d}{=}\ \frac{1}{\lambda}\left(\sum_{j=1}^{i}\frac{Z_{j}}{n-j+1}\right)$$

where the Zj are iid standard exponential random variables (i.e. with rate parameter 1). This result was first published by Alfréd Rényi.[5][6]
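A minimal sketch of how this representation might be used: taking expectations term by term gives E[X(i)] as a partial sum of 1/(n − j + 1), scaled by 1/λ, and cumulative sums of the scaled Zj give one draw of all n order statistics without sorting. The function names are hypothetical. Reusing the same Zj across all i relies on the representation holding jointly, which is the usual statement of Rényi's result but goes beyond the marginal statement above.

```python
import random


def expected_exponential_order_stat(i, n, lam):
    """E[X_(i)] from the representation, using E[Z_j] = 1 for each term."""
    return sum(1.0 / (n - j + 1) for j in range(1, i + 1)) / lam


def sample_exponential_order_stats(n, lam, rng):
    """Draw X_(1), ..., X_(n) as cumulative sums of Z_j / (n - j + 1), divided by lam."""
    stats = []
    running = 0.0
    for j in range(1, n + 1):
        z_j = rng.expovariate(1.0)       # standard exponential, rate 1
        running += z_j / (n - j + 1)     # non-negative increment keeps the order
        stats.append(running / lam)
    return stats


rng = random.Random(0)
print(sample_exponential_order_stats(5, 2.0, rng))
print(expected_exponential_order_stat(1, 5, 2.0))  # minimum: 1 / (n * lam)
```

The expected minimum equals 1/(nλ), matching the fact that the minimum of n iid exponentials with rate λ is exponential with rate nλ.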

Order statistics sampled from an Erlang distribution

The Laplace transform of the order statistics of a sample from an Erlang distribution may be obtained via a path counting method.[7]

The joint distribution of the order statistics of an absolutely continuous distribution

If FX is absolutely continuous, it has a density such that $dF_{X}(x)=f_{X}(x)\,dx$, and we can use the substitutions

$$u=F_{X}(x)$$

and

$$du=f_{X}(x)\,dx$$

to derive the following probability density functions for the order statistics of a sample of size n drawn from the distribution of X:

$$f_{X_{(k)}}(x)=\frac{n!}{(k-1)!(n-k)!}[F_{X}(x)]^{k-1}[1-F_{X}(x)]^{n-k}f_{X}(x)$$

$$f_{X_{(j)},X_{(k)}}(x,y)=\frac{n!}{(j-1)!(k-j-1)!(n-k)!}[F_{X}(x)]^{j-1}[F_{X}(y)-F_{X}(x)]^{k-1-j}[1-F_{X}(y)]^{n-k}f_{X}(x)f_{X}(y)$$

where $x\leq y$.
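The marginal density of the kth order statistic can be evaluated for any distribution whose cdf and pdf are available as functions. The sketch below is illustrative only: `order_stat_pdf` and the exponential helpers are hypothetical names, and the exponential distribution with rate 2 is chosen simply because the density of its minimum is known in closed form.

```python
from math import exp, factorial


def order_stat_pdf(x, k, n, cdf, pdf):
    """Density of X_(k) at x for an iid sample of size n with the given cdf and pdf."""
    coef = factorial(n) // (factorial(k - 1) * factorial(n - k))
    F = cdf(x)
    return coef * F**(k - 1) * (1 - F)**(n - k) * pdf(x)


lam = 2.0  # rate of the example exponential distribution (an assumption)


def exp_cdf(x):
    return 1 - exp(-lam * x)


def exp_pdf(x):
    return lam * exp(-lam * x)


n, x = 4, 0.3
# The minimum of n iid Exp(lam) variables is Exp(n * lam), so both values should match.
print(order_stat_pdf(x, 1, n, exp_cdf, exp_pdf), n * lam * exp(-n * lam * x))
```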

Application: confidence intervals for quantiles

An interesting question is how well the order statistics perform as estimators of the quantiles of the underlying distribution.

A small-sample-size example

The simplest case to consider is how well the sample median estimates the population median.

As an example, consider a random sample of size 6. In that case, the sample median is usually defined as the midpoint of the interval delimited by the 3rd and 4th order statistics. However, we know from the preceding discussion that the probability that this interval actually contains the population median is

$$\binom{6}{3}(1/2)^{6}=\frac{5}{16}\approx 31\%.$$

Although the sample median is probably among the best distribution-independent point estimates of the population median, what this example illustrates is that it is not a particularly good one in absolute terms. In this particular case, a better confidence interval for the median is the one delimited by the 2nd and 5th order statistics, which contains the population median with probability

$$\left[\binom{6}{2}+\binom{6}{3}+\binom{6}{4}\right](1/2)^{6}=\frac{25}{32}\approx 78\%.$$

With such a small sample size, if one wants at least 95% confidence, one is reduced to saying that the median is between the minimum and the maximum of the 6 observations with probability 31/32 or approximately 97%. Size 6 is, in fact, the smallest sample size such that the interval determined by the minimum and the maximum is at least a 95% confidence interval for the population median.
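These coverage probabilities all come from the same count: for a continuous distribution, the number of observations below the population median is Binomial(n, 1/2), and the interval from the ith to the jth order statistic contains the median exactly when that count lies between i and j − 1. A short sketch with exact fractions follows; the function name `median_coverage` is an assumption made for illustration.

```python
from fractions import Fraction
from math import comb


def median_coverage(n, i, j):
    """P(X_(i) < population median < X_(j)) for a continuous iid sample of size n."""
    # The count of observations below the median must be i, i + 1, ..., j - 1.
    return Fraction(sum(comb(n, c) for c in range(i, j)), 2**n)


print(median_coverage(6, 3, 4))  # 5/16
print(median_coverage(6, 2, 5))  # 25/32
print(median_coverage(6, 1, 6))  # 31/32
print(median_coverage(5, 1, 5))  # 15/16
```

The last line shows that with n = 5 the interval from the minimum to the maximum has coverage 15/16, which is below 95%.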

Large sample sizes

For the uniform distribution, as n tends to infinity, the pth sample quantile is asymptotically normally distributed, since it is approximated by

$$U_{(\lceil np\rceil )}\sim AN\left(p,\frac{p(1-p)}{n}\right).$$

For a general distribution F with a continuous non-zero density at $F^{-1}(p)$, a similar asymptotic normality applies:

$$X_{(\lceil np\rceil )}\sim AN\left(F^{-1}(p),\frac{p(1-p)}{n[f(F^{-1}(p))]^{2}}\right)$$

where f is the density function, and $F^{-1}$ is the quantile function associated with F. One of the first people to mention and prove this result was Frederick Mosteller in his seminal paper in 1946.[8] Further research led in the 1960s to the Bahadur representation, which provides information about the error bounds. The convergence to the normal distribution also holds in a stronger sense, such as convergence in relative entropy or KL divergence.[9]

An interesting observation can be made in the case where the distribution is symmetric, and the population median equals the population mean. In this case, the sample mean, by the central limit theorem, is also asymptotically normally distributed, but with variance $\sigma^{2}/n$ instead. This asymptotic analysis suggests that the mean outperforms the median in cases of low kurtosis, and vice versa. For example, the median achieves better confidence intervals for the Laplace distribution, while the mean performs better for X that are normally distributed.
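The comparison between mean and median can be made concrete by plugging p = 1/2 into the asymptotic variance p(1 − p)/(n f(F⁻¹(p))²) and comparing it with σ²/n. The sketch below does this for a standard normal and a unit-scale Laplace distribution; the function name, the sample size n = 100 and the unit scale parameters are assumptions chosen for illustration.

```python
from math import pi, sqrt


def median_asymptotic_variance(density_at_median, n):
    """Asymptotic variance p(1 - p) / (n f^2) of the sample median, with p = 1/2."""
    p = 0.5
    return p * (1 - p) / (n * density_at_median**2)


n = 100

# Normal with standard deviation sigma: density at the median is 1 / (sigma * sqrt(2 pi)).
sigma = 1.0
normal_median_var = median_asymptotic_variance(1 / (sigma * sqrt(2 * pi)), n)
normal_mean_var = sigma**2 / n

# Laplace with scale b: density at the median is 1 / (2 b), and the variance is 2 b^2.
b = 1.0
laplace_median_var = median_asymptotic_variance(1 / (2 * b), n)
laplace_mean_var = 2 * b**2 / n

print(normal_median_var, normal_mean_var)    # the mean has the smaller variance
print(laplace_median_var, laplace_mean_var)  # the median has the smaller variance
```

For the normal case the median's asymptotic variance is π/2 times that of the mean; for the Laplace case it is half that of the mean.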

Proof

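It can be shown that

$$\operatorname{Beta}(k,n+1-k)\ \stackrel{d}{=}\ \frac{X}{X+Y},$$

where

$$X=\sum_{i=1}^{k}Z_{i},\quad Y=\sum_{i=k+1}^{n+1}Z_{i},$$

with Zi being independent identically distributed exponential random variables with rate 1. Since X/n and Y/n are asymptotically normally distributed by the CLT, our results follow by application of the delta method.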

Application: Non-parametric density estimation

Moments of the distribution for the first order statistic can be used to develop a non-parametric density estimator.[10] Suppose we want to estimate the density $f_{X}$ at the point $x^{*}$. Consider the random variables $Y_{i}=|X_{i}-x^{*}|$, which are i.i.d. with density function $g_{Y}(y)=f_{X}(y+x^{*})+f_{X}(x^{*}-y)$. In particular, $f_{X}(x^{*})=\frac{g_{Y}(0)}{2}$.

The expected value of the first order statistic $Y_{(1)}$ given a sample of $N$ total observations yields,

$$E(Y_{(1)})=\frac{1}{(N+1)g_{Y}(0)}+\frac{1}{(N+1)(N+2)}\int_{0}^{1}Q''(z)\,\delta_{N+1}(z)\,dz$$

where $Q$ is the quantile function associated with the distribution $g_{Y}$, and $\delta_{N}(z)=(N+1)(1-z)^{N}$. This equation in combination with a jackknifing technique becomes the basis for the following density estimation algorithm,

Input: A sample of $N$ observations. $\{x_{\ell}\}_{\ell=1}^{M}$ points of density evaluation. Tuning parameter $a\in (0,1)$ (usually 1/3).

Output: $\{\hat{f}_{\ell}\}_{\ell=1}^{M}$ estimated density at the points of evaluation.

1: Set $m_{N}=\operatorname{round}(N^{1-a})$

2: Set $s_{N}=\frac{N}{m_{N}}$

3: Create an $s_{N}\times m_{N}$ matrix $M_{ij}$ which holds $m_{N}$ subsets with $s_{N}$ observations each.

4: Create a vector $\hat{f}$ to hold the density evaluations.

5: for $\ell =1\to M$ do

6: for $k=1\to m_{N}$ do

7: Find the nearest distance $d_{\ell k}$ to the current point $x_{\ell}$ within the $k$th subset

8: end for

9: Compute the subset average of distances to $x_{\ell}$: $d_{\ell}=\sum_{k=1}^{m_{N}}\frac{d_{\ell k}}{m_{N}}$

10: Compute the density estimate at $x_{\ell}$: $\hat{f}_{\ell}=\frac{1}{2(1+s_{N})d_{\ell}}$

11: end for

12: return $\hat{f}$
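A minimal Python sketch of this procedure follows. It rests on several assumptions that should be checked against the cited reference: the number of subsets is taken as round(N^(1−a)), the subset size is obtained by integer division (any leftover observations are dropped), subsets are consecutive slices of the sample in the order given, and the estimate at each point is 1 / (2 (1 + s) d̄), where d̄ is the average nearest distance across subsets. The function name `order_stat_density` is hypothetical.

```python
import random


def order_stat_density(sample, points, a=1 / 3):
    """Estimate the density at each point from nearest distances within subsets."""
    n = len(sample)
    m = max(1, round(n ** (1 - a)))   # number of subsets (assumed: round(N^(1-a)))
    s = n // m                        # observations per subset (integer division assumed)
    subsets = [sample[k * s:(k + 1) * s] for k in range(m)]

    estimates = []
    for x in points:
        # First order statistic of |X_i - x| within each subset.
        nearest = [min(abs(obs - x) for obs in subset) for subset in subsets]
        d_bar = sum(nearest) / m      # average nearest distance over the subsets
        estimates.append(1.0 / (2 * (1 + s) * d_bar))
    return estimates


rng = random.Random(0)
data = [rng.random() for _ in range(10_000)]   # Uniform(0, 1): true density is 1
print(order_stat_density(data, [0.25, 0.5, 0.75]))
```

The sketch does not guard against a zero average distance (for example, when an evaluation point coincides with an observation in every subset), and it makes no boundary correction, so estimates near the edge of the support can be biased.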

In contrast to the bandwidth/length based tuning parameters for histogram and kernel based approaches, the tuning parameter for the order statistic based density estimator is the size of sample subsets. Such an estimator is more robust than histogram and kernel based approaches; for example, densities like the Cauchy distribution (which lack finite moments) can be inferred without the need for specialized modifications such as IQR based bandwidths. This is because the first moment of the order statistic always exists if the expected value of the underlying distribution does, but the converse is not necessarily true.[11]

Dealing with discrete variables

Suppose $X_{1},X_{2},\ldots ,X_{n}$ are i.i.d. random variables from a discrete distribution with cumulative distribution function $F(x)$ and probability mass function $f(x)$. To find the probabilities of the $k^{\text{th}}$ order statistics, three values are first needed, namely

$$p_{1}=P(X<x)=F(x)-f(x),\quad p_{2}=P(X=x)=f(x),\quad p_{3}=P(X>x)=1-F(x).$$

The cumulative distribution function of the $k^{\text{th}}$ order statistic can be computed by noting that

$$P(X_{(k)}\leq x)=P(\text{there are at most }n-k\text{ observations greater than }x)=\sum_{j=0}^{n-k}\binom{n}{j}p_{3}^{j}(p_{1}+p_{2})^{n-j}.$$

Similarly, $P(X_{(k)}<x)$ is given by

$$P(X_{(k)}<x)=P(\text{there are at most }n-k\text{ observations greater than or equal to }x)=\sum_{j=0}^{n-k}\binom{n}{j}(p_{2}+p_{3})^{j}p_{1}^{n-j}.$$

Note that the probability mass function of $X_{(k)}$ is just the difference of these values, that is to say

$$P(X_{(k)}=x)=P(X_{(k)}\leq x)-P(X_{(k)}<x)=\sum_{j=0}^{n-k}\binom{n}{j}\left(p_{3}^{j}(p_{1}+p_{2})^{n-j}-(p_{2}+p_{3})^{j}p_{1}^{n-j}\right).$$
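The discrete case can be sketched in a few lines: compute P(X < x), P(X = x) and P(X > x), form the two binomial sums for P(X(k) ≤ x) and P(X(k) < x), and take their difference. The example below uses a fair six-sided die with exact fractions; the function name `discrete_order_stat_pmf` and the die helpers are assumptions made for illustration.

```python
from fractions import Fraction
from math import comb


def discrete_order_stat_pmf(x, k, n, cdf, pmf):
    """P(X_(k) = x) for n iid draws from a discrete distribution with the given cdf and pmf."""
    p1 = cdf(x) - pmf(x)   # P(X < x)
    p2 = pmf(x)            # P(X = x)
    p3 = 1 - cdf(x)        # P(X > x)
    # P(X_(k) <= x): at most n - k observations are strictly greater than x.
    at_most = sum(comb(n, j) * p3**j * (p1 + p2)**(n - j) for j in range(n - k + 1))
    # P(X_(k) < x): at most n - k observations are greater than or equal to x.
    strictly_less = sum(comb(n, j) * (p2 + p3)**j * p1**(n - j) for j in range(n - k + 1))
    return at_most - strictly_less


def die_cdf(x):
    return Fraction(x, 6)


def die_pmf(x):
    return Fraction(1, 6)


# Maximum of two dice (k = n = 2): P(max = x) = (2x - 1) / 36.
print([discrete_order_stat_pmf(x, 2, 2, die_cdf, die_pmf) for x in range(1, 7)])
print(discrete_order_stat_pmf(6, 2, 2, die_cdf, die_pmf))  # 11/36
```

Summing the probabilities over x = 1, ..., 6 gives 1, as it should for a probability mass function.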
