Types of graphical models

Generally, probabilistic graphical models use a graph-based representation as the foundation for encoding a distribution over a multi-dimensional space and a graph that is a compact or factorized representation of a set of independences that hold in the specific distribution. Two branches of graphical representations of distributions are commonly used, namely, Bayesian networks and Markov random fields. Both families encompass the properties of factorization and independences, but they differ in the set of independences they can encode and the factorization of the distribution that they induce.[1]

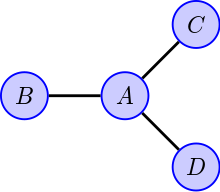

Undirected Graphical Model

The undirected graph shown may have one of several interpretations; the common feature is that the presence of an edge implies some sort of dependence between the corresponding random variables. From this graph we might deduce that are all mutually independent, once is known, or (equivalently in this case) that

for some non-negative functions .

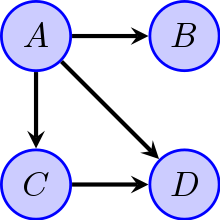

Bayesian network

|

Main article: Bayesian network |

If the network structure of the model is a directed acyclic graph, the model represents a factorization of the joint probability of all random variables. More precisely, if the events are then the joint probability satisfies

where is the set of parents of node (nodes with edges directed towards ). In other words, the joint distribution factors into a product of conditional distributions. For example, in the directed acyclic graph shown in the Figure this factorization would be

- .

Any two nodes are conditionally independent given the values of their parents. In general, any two sets of nodes are conditionally independent given a third set if a criterion called d-separation holds in the graph. Local independences and global independences are equivalent in Bayesian networks.

This type of graphical model is known as a directed graphical model, Bayesian network, or belief network. Classic machine learning models like hidden Markov models, neural networks and newer models such as variable-order Markov models can be considered special cases of Bayesian networks.

One of the simplest Bayesian Networks is the Naive Bayes classifier.

Cyclic Directed Graphical Models

The next figure depicts a graphical model with a cycle. This may be interpreted in terms of each variable 'depending' on the values of its parents in some manner. The particular graph shown suggests a joint probability density that factors as

- ,

but other interpretations are possible. [2]

Other types

- Dependency network where cycles are allowed

- Tree-augmented classifier or TAN model

- Targeted Bayesian network learning (TBNL)

TBNL model for "corral dataset" - A factor graph is an undirected bipartite graph connecting variables and factors. Each factor represents a function over the variables it is connected to. This is a helpful representation for understanding and implementing belief propagation.

- A clique tree or junction tree is a tree of cliques, used in the junction tree algorithm.

- A chain graph is a graph which may have both directed and undirected edges, but without any directed cycles (i.e. if we start at any vertex and move along the graph respecting the directions of any arrows, we cannot return to the vertex we started from if we have passed an arrow). Both directed acyclic graphs and undirected graphs are special cases of chain graphs, which can therefore provide a way of unifying and generalizing Bayesian and Markov networks.[3]

- An ancestral graph is a further extension, having directed, bidirected and undirected edges.[4]

- Random field techniques

- A Markov random field, also known as a Markov network, is a model over an undirected graph. A graphical model with many repeated subunits can be represented with plate notation.

- A conditional random field is a discriminative model specified over an undirected graph.

- A restricted Boltzmann machine is a bipartite generative model specified over an undirected graph.

![{\displaystyle P[A,B,C,D]=f_{AB}[A,B]\cdot f_{AC}[A,C]\cdot f_{AD}[A,D]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/c64734658a77405544a6b6ba63d942e1d26359e8)

![{\displaystyle P[X_{1},\ldots ,X_{n}]=\prod _{i=1}^{n}P[X_{i}|{\text{pa))(X_{i})]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/67d249e0dd3e93ff25c04e48dca06c753963f80b)

![{\displaystyle P[A,B,C,D]=P[A]\cdot P[B|A]\cdot P[C|A]\cdot P[D|A,C]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/6327d7569c16f6222608f96ec4522fd015cfaeb6)

![{\displaystyle P[A,B,C,D]=P[A]\cdot P[B]\cdot P[C,D|A,B]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/646977af1489b1ac6bbe1b764335f9361c93ce48)