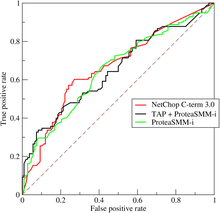

A receiver operating characteristic curve, or ROC curve, is a graphical plot that illustrates the performance of a binary classifier model at varying threshold values. It can also be extended to multi-class classification.

The ROC curve is the plot of the true positive rate (TPR) against the false positive rate (FPR) at each threshold setting.
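The construction can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `roc_points`, the toy scores and labels, and the convention that a score greater than or equal to the threshold counts as a positive prediction are all assumptions made for the example.

```python
def roc_points(scores, labels):
    """Return one (FPR, TPR) pair per candidate threshold.

    Assumed convention: an instance is predicted positive when its
    score is >= the threshold. Labels are 1 (positive) or 0 (negative).
    """
    positives = sum(labels)
    negatives = len(labels) - positives
    # An infinite threshold predicts nothing as positive: the (0, 0) corner.
    thresholds = [float("inf")] + sorted(set(scores), reverse=True)
    points = []
    for t in thresholds:
        tp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 0)
        points.append((fp / negatives, tp / positives))
    return points


# Hypothetical classifier scores and true labels.
scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3, 0.2]
labels = [1, 1, 0, 1, 0, 1, 0, 0]
points = roc_points(scores, labels)
```

Lowering the threshold can only add predicted positives, so the points move monotonically from (0, 0) to (1, 1).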

The ROC can also be thought of as a plot of the statistical power as a function of the Type I error of the decision rule (when the performance is calculated from just a sample of the population, the plotted values are estimators of these quantities). The ROC curve is thus the sensitivity or recall as a function of false positive rate.

Given that the probability distributions for both true positive and false positive are known, the ROC curve is obtained as the cumulative distribution function (CDF, area under the probability distribution from $-\infty$ to the discrimination threshold) of the detection probability on the y-axis versus the CDF of the false positive probability on the x-axis.

ROC analysis provides tools to select possibly optimal models and to discard suboptimal ones independently from (and prior to specifying) the cost context or the class distribution. ROC analysis is related in a direct and natural way to the cost/benefit analysis of diagnostic decision making.

Terminology

The true-positive rate is also known as sensitivity, recall or probability of detection.[1] The false-positive rate is also known as the probability of false alarm[1] and equals (1 − specificity). The ROC is also known as a relative operating characteristic curve, because it is a comparison of two operating characteristics (TPR and FPR) as the criterion changes.[2]

History

The ROC curve was first developed by electrical engineers and radar engineers during World War II for detecting enemy objects in battlefields, starting in 1941, which led to its name ("receiver operating characteristic").[3]

It was soon introduced to psychology to account for the perceptual detection of stimuli. ROC analysis has been used in medicine, radiology, biometrics, forecasting of natural hazards,[4] meteorology,[5] model performance assessment,[6] and other areas for many decades and is increasingly used in machine learning and data mining research.

Basic concept

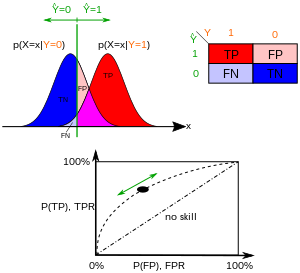

A classification model (classifier or diagnosis[7]) is a mapping of instances between certain classes/groups. Because the classifier or diagnosis result can be an arbitrary real value (continuous output), the classifier boundary between classes must be determined by a threshold value (for instance, to determine whether a person has hypertension based on a blood pressure measure). Alternatively, the output can be a discrete class label, indicating one of the classes.

Consider a two-class prediction problem (binary classification), in which the outcomes are labeled either as positive (p) or negative (n). There are four possible outcomes from a binary classifier. If the outcome from a prediction is p and the actual value is also p, then it is called a true positive (TP); however if the actual value is n then it is said to be a false positive (FP). Conversely, a true negative (TN) has occurred when both the prediction outcome and the actual value are n, and a false negative (FN) is when the prediction outcome is n while the actual value is p.

To get an appropriate example in a real-world problem, consider a diagnostic test that seeks to determine whether a person has a certain disease. A false positive in this case occurs when the person tests positive, but does not actually have the disease. A false negative, on the other hand, occurs when the person tests negative, suggesting they are healthy, when they actually do have the disease.

Consider an experiment with P positive instances and N negative instances for some condition. The four outcomes can be formulated in a 2×2 contingency table or confusion matrix, as follows:

Sources: [8][9][10][11][12][13][14][15]

| Actual condition \ Predicted condition | Predicted positive (PP) | Predicted negative (PN) | Derived measure | Derived measure |
|---|---|---|---|---|
| Total population = P + N | | | Informedness, bookmaker informedness (BM) = TPR + TNR − 1 | Prevalence threshold (PT) = (√(TPR × FPR) − FPR) / (TPR − FPR) |
| Positive (P) [a] | True positive (TP), hit [b] | False negative (FN), miss, underestimation | True positive rate (TPR), recall, sensitivity (SEN), probability of detection, hit rate, power = TP/P = 1 − FNR | False negative rate (FNR), miss rate, type II error [c] = FN/P = 1 − TPR |
| Negative (N) [d] | False positive (FP), false alarm, overestimation | True negative (TN), correct rejection [e] | False positive rate (FPR), probability of false alarm, fall-out, type I error [f] = FP/N = 1 − TNR | True negative rate (TNR), specificity (SPC), selectivity = TN/N = 1 − FPR |
| Prevalence = P/(P + N) | Positive predictive value (PPV), precision = TP/PP = 1 − FDR | False omission rate (FOR) = FN/PN = 1 − NPV | Positive likelihood ratio (LR+) = TPR/FPR | Negative likelihood ratio (LR−) = FNR/TNR |
| Accuracy (ACC) = (TP + TN)/(P + N) | False discovery rate (FDR) = FP/PP = 1 − PPV | Negative predictive value (NPV) = TN/PN = 1 − FOR | Markedness (MK), deltaP (Δp) = PPV + NPV − 1 | Diagnostic odds ratio (DOR) = LR+/LR− |
| Balanced accuracy (BA) = (TPR + TNR)/2 | F1 score = 2 PPV × TPR/(PPV + TPR) = 2 TP/(2 TP + FP + FN) | Fowlkes–Mallows index (FM) = √(PPV × TPR) | Matthews correlation coefficient (MCC) = √(TPR × TNR × PPV × NPV) − √(FNR × FPR × FOR × FDR) | Threat score (TS), critical success index (CSI), Jaccard index = TP/(TP + FN + FP) |

- [a] The number of real positive cases in the data.

- [b] A test result that correctly indicates the presence of a condition or characteristic.

- [c] Type II error: a test result which wrongly indicates that a particular condition or attribute is absent.

- [d] The number of real negative cases in the data.

- [e] A test result that correctly indicates the absence of a condition or characteristic.

- [f] Type I error: a test result which wrongly indicates that a particular condition or attribute is present.
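As a rough illustration of how the derived measures follow from the four cell counts, the sketch below computes a subset of them. The function name `confusion_metrics` and the counts passed to it are hypothetical, and the code assumes every denominator is non-zero.

```python
from math import sqrt


def confusion_metrics(tp, fn, fp, tn):
    """Derive common measures from the four cells of a 2x2 confusion matrix."""
    p, n = tp + fn, fp + tn      # actual positives / actual negatives
    pp, pn = tp + fp, fn + tn    # predicted positives / predicted negatives
    tpr, fnr = tp / p, fn / p
    fpr, tnr = fp / n, tn / n
    ppv, npv = tp / pp, tn / pn
    fdr, f_or = 1 - ppv, 1 - npv
    return {
        "TPR": tpr, "FNR": fnr, "FPR": fpr, "TNR": tnr,
        "PPV": ppv, "NPV": npv, "FDR": fdr, "FOR": f_or,
        "ACC": (tp + tn) / (p + n),
        "BA": (tpr + tnr) / 2,
        "F1": 2 * tp / (2 * tp + fp + fn),
        "BM": tpr + tnr - 1,     # informedness
        "MK": ppv + npv - 1,     # markedness
        "MCC": sqrt(tpr * tnr * ppv * npv) - sqrt(fnr * fpr * f_or * fdr),
    }


# Hypothetical counts, chosen only for illustration.
m = confusion_metrics(tp=40, fn=10, fp=20, tn=130)
```

The MCC expression used here is the rate-based form from the table; it agrees with the count-based form (TP × TN − FP × FN) / √(PP × PN × P × N).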

ROC space


Several evaluation "metrics" can be derived from the contingency table (see the table above). To draw a ROC curve, only the true positive rate (TPR) and false positive rate (FPR) are needed (as functions of some classifier parameter). The TPR defines how many correct positive results occur among all positive samples available during the test. FPR, on the other hand, defines how many incorrect positive results occur among all negative samples available during the test.

A ROC space is defined by FPR and TPR as x and y axes, respectively, which depicts relative trade-offs between true positive (benefits) and false positive (costs). Since TPR is equivalent to sensitivity and FPR is equal to 1 − specificity, the ROC graph is sometimes called the sensitivity vs (1 − specificity) plot. Each prediction result or instance of a confusion matrix represents one point in the ROC space.

The best possible prediction method would yield a point in the upper left corner or coordinate (0,1) of the ROC space, representing 100% sensitivity (no false negatives) and 100% specificity (no false positives). The (0,1) point is also called a perfect classification. A random guess would give a point along a diagonal line (the so-called line of no-discrimination) from the bottom left to the top right corners (regardless of the positive and negative base rates).[16] An intuitive example of random guessing is a decision by flipping coins. As the size of the sample increases, a random classifier's ROC point tends towards the diagonal line. In the case of a balanced coin, it will tend to the point (0.5, 0.5).

The diagonal divides the ROC space. Points above the diagonal represent good classification results (better than random); points below the line represent bad results (worse than random). Note that the output of a consistently bad predictor could simply be inverted to obtain a good predictor.

Consider four prediction results from 100 positive and 100 negative instances:

| Metric | A | B | C | C′ |
|---|---|---|---|---|
| TPR | 0.63 | 0.77 | 0.24 | 0.76 |
| FPR | 0.28 | 0.77 | 0.88 | 0.12 |
| PPV | 0.69 | 0.50 | 0.21 | 0.86 |
| F1 | 0.66 | 0.61 | 0.23 | 0.81 |
| ACC | 0.68 | 0.50 | 0.18 | 0.82 |

Plots of the four results above in the ROC space are given in the figure. The result of method A clearly shows the best predictive power among A, B, and C. The result of B lies on the random guess line (the diagonal line), and it can be seen in the table that the accuracy of B is 50%. However, when C is mirrored across the center point (0.5,0.5), the resulting method C′ is even better than A. This mirrored method simply reverses the predictions of whatever method or test produced the C contingency table. Although the original C method has negative predictive power, simply reversing its decisions leads to a new predictive method C′ which has positive predictive power. When the C method predicts p or n, the C′ method would predict n or p, respectively. In this manner, the C′ test would perform the best. The closer a result from a contingency table is to the upper left corner, the better it predicts, but the distance from the random guess line in either direction is the best indicator of how much predictive power a method has. If the result is below the line (i.e. the method is worse than a random guess), all of the method's predictions must be reversed in order to utilize its power, thereby moving the result above the random guess line.
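The tabulated values can be checked with a short script. The cell counts below are not listed in the text; they are the counts implied by the stated TPR and FPR values with 100 positive and 100 negative instances, and the helper name `summarize` is hypothetical.

```python
def summarize(tp, fn, fp, tn):
    """Compute the five measures shown in the comparison table."""
    return {
        "TPR": tp / (tp + fn),
        "FPR": fp / (fp + tn),
        "PPV": tp / (tp + fp),
        "F1": 2 * tp / (2 * tp + fp + fn),
        "ACC": (tp + tn) / (tp + fn + fp + tn),
    }


# (TP, FN, FP, TN), inferred from TPR and FPR with P = N = 100.
counts = {
    "A": (63, 37, 28, 72),
    "B": (77, 23, 77, 23),
    "C": (24, 76, 88, 12),
}

# C' reverses every decision of C: predicted positives become negatives
# and vice versa, so TP swaps with FN and FP swaps with TN.
tp, fn, fp, tn = counts["C"]
counts["C'"] = (fn, tp, tn, fp)

results = {name: summarize(*cells) for name, cells in counts.items()}
for name, r in results.items():
    rounded = {k: round(v, 2) for k, v in r.items()}
    print(name, rounded, "TPR - FPR =", round(r["TPR"] - r["FPR"], 2))
```

The printed difference TPR − FPR is the signed vertical distance from the random guess line: it is zero for B, negative for C, and has the same magnitude but opposite sign for C′.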

Curves in ROC space


In binary classification, the class prediction for each instance is often made based on a continuous random variable $X$, which is a "score" computed for the instance (e.g. the estimated probability in logistic regression). Given a threshold parameter $T$, the instance is classified as "positive" if $X > T$, and "negative" otherwise. $X$ follows a probability density $f_1(x)$ if the instance actually belongs to class "positive", and $f_0(x)$ otherwise. Therefore, the true positive rate is given by $\mathrm{TPR}(T) = \int_T^{\infty} f_1(x)\,dx$ and the false positive rate is given by $\mathrm{FPR}(T) = \int_T^{\infty} f_0(x)\,dx$. The ROC curve plots $\mathrm{TPR}(T)$ versus $\mathrm{FPR}(T)$ parametrically, with $T$ as the varying parameter.

For example, imagine that the blood protein levels in diseased people and healthy people are normally distributed with means of 2 g/dL and 1 g/dL respectively. A medical test might measure the level of a certain protein in a blood sample and classify any number above a certain threshold as indicating disease. The experimenter can adjust the threshold (green vertical line in the figure), which will in turn change the false positive rate. Increasing the threshold would result in fewer false positives (and more false negatives), corresponding to a leftward movement on the curve. The actual shape of the curve is determined by how much overlap the two distributions have.
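The protein example can be sketched numerically with Python's `statistics.NormalDist`. The means come from the text; the standard deviation of 1 g/dL for both groups is an assumption made only for this illustration, as is the function name `rates`.

```python
from statistics import NormalDist

# Means from the example; sigma = 1.0 is an assumed value.
diseased = NormalDist(mu=2.0, sigma=1.0)
healthy = NormalDist(mu=1.0, sigma=1.0)


def rates(threshold):
    """Return (FPR, TPR) when levels above `threshold` indicate disease."""
    tpr = 1.0 - diseased.cdf(threshold)  # diseased people above the threshold
    fpr = 1.0 - healthy.cdf(threshold)   # healthy people above the threshold
    return fpr, tpr


low = rates(1.0)    # permissive threshold
high = rates(2.0)   # strict threshold

# Sweeping the threshold traces the whole curve.
curve = [rates(t / 10) for t in range(-20, 51)]
```

Raising the threshold from 1.0 to 2.0 lowers both rates, which is the leftward (and downward) movement along the curve described above.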

Criticisms


Several studies criticize certain applications of the ROC curve and its area under the curve as measurements for assessing binary classifications when they do not capture the information relevant to the application.[18][17][19][20][21]

The main criticism of the ROC curve described in these studies concerns the inclusion of areas with low sensitivity and low specificity (both lower than 0.5) in the calculation of the total area under the curve (AUC),[19] as described in the plot on the right.

According to the authors of these studies, that portion of the area under the curve (with low sensitivity and low specificity) corresponds to confusion matrices where binary predictions obtain bad results, and therefore should not be included in the assessment of overall performance. Moreover, that portion of the AUC corresponds to high or low confusion matrix thresholds, which are rarely of interest to scientists performing a binary classification in any field.[19]

Another criticism of the ROC and its area under the curve is that they say nothing about precision and negative predictive value.[17]

A high ROC AUC, such as 0.9 for example, might correspond to low values of precision and negative predictive value, such as 0.2 and 0.1 in the [0, 1] range. If one performed a binary classification, obtained an ROC AUC of 0.9 and decided to focus only on this metric, they might overoptimistically believe their binary test was excellent. However, if this person took a look at the values of precision and negative predictive value, they might discover their values are low.

The ROC AUC summarizes sensitivity and specificity, but does not inform regarding precision and negative predictive value.[17]

Further interpretations

Sometimes, the ROC is used to generate a summary statistic. Common versions are:

- the intercept of the ROC curve with the line at 45 degrees orthogonal to the no-discrimination line - the balance point where Sensitivity = Specificity

- the intercept of the ROC curve with the tangent at 45 degrees parallel to the no-discrimination line that is closest to the error-free point (0,1) – also called Youden's J statistic and generalized as Informedness[citation needed]

- the area between the ROC curve and the no-discrimination line multiplied by two is called the Gini coefficient, especially in the context of credit scoring.[22] It should not be confused with the measure of statistical dispersion also called Gini coefficient.

- the area between the full ROC curve and the triangular ROC curve including only (0,0), (1,1) and one selected operating point – Consistency[23]

- the area under the ROC curve, or "AUC" ("area under curve"), or A' (pronounced "a-prime"),[24] or "c-statistic" ("concordance statistic").[25]

- the sensitivity index d′ (pronounced "d-prime"), the distance between the mean of the distribution of activity in the system under noise-alone conditions and its distribution under signal-alone conditions, divided by their standard deviation, under the assumption that both these distributions are normal with the same standard deviation. Under these assumptions, the shape of the ROC is entirely determined by d′.

However, any attempt to summarize the ROC curve into a single number loses information about the pattern of tradeoffs of the particular discriminator algorithm.
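Two of the summary statistics listed above can be read off a set of operating points directly. The sketch below uses a hypothetical list of (FPR, TPR) points and simply picks the best point from that list; it does not interpolate between points.

```python
# Hypothetical operating points (FPR, TPR), sorted by increasing FPR.
roc = [
    (0.0, 0.0), (0.05, 0.40), (0.10, 0.65), (0.20, 0.80),
    (0.40, 0.90), (0.70, 0.97), (1.0, 1.0),
]

# Youden's J = sensitivity + specificity - 1 = TPR - FPR, i.e. the
# vertical distance of a point above the no-discrimination line.
best = max(roc, key=lambda point: point[1] - point[0])
youden_j = best[1] - best[0]

# Balance point: the listed point where sensitivity (TPR) is closest
# to specificity (1 - FPR).
balance = min(roc, key=lambda point: abs(point[1] - (1.0 - point[0])))
```

In this toy data both criteria select the same point, but in general they need not coincide.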

Probabilistic interpretation

The area under the curve (often referred to as simply the AUC) is equal to the probability that a classifier will rank a randomly chosen positive instance higher than a randomly chosen negative one (assuming 'positive' ranks higher than 'negative').[26] In other words, when given one randomly selected positive instance and one randomly selected negative instance, AUC is the probability that the classifier will be able to tell which one is which.

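This can be seen as follows: the area under the curve is given by (the integral boundaries are reversed because a large threshold $T$ corresponds to a lower value on the x-axis)

$$A = \int_{x=0}^{1} \mathrm{TPR}\left(\mathrm{FPR}^{-1}(x)\right)dx = \int_{\infty}^{-\infty} \mathrm{TPR}(T)\,\mathrm{FPR}'(T)\,dT = \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} I(T' > T)\,f_1(T')\,f_0(T)\,dT'\,dT = P(X_1 > X_0)$$

where $X_1$ is the score for a positive instance, $X_0$ is the score for a negative instance, and $f_1$ and $f_0$ are the probability densities of the score for positive and negative instances, as defined in the previous section.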

Area under the curve

It can be shown that the AUC is closely related to the Mann–Whitney U,[27][28] which tests whether positives are ranked higher than negatives. For a predictor $f$, an unbiased estimator of its AUC can be expressed by the following Wilcoxon-Mann-Whitney statistic:[29]

$$\mathrm{AUC}(f) = \frac{\sum_{t_0 \in \mathcal{D}^0} \sum_{t_1 \in \mathcal{D}^1} \mathbf{1}[f(t_0) < f(t_1)]}{|\mathcal{D}^0| \cdot |\mathcal{D}^1|},$$

where $\mathbf{1}[f(t_0) < f(t_1)]$ denotes an indicator function which returns 1 if $f(t_0) < f(t_1)$ and 0 otherwise; $\mathcal{D}^0$ is the set of negative examples, and $\mathcal{D}^1$ is the set of positive examples.

In the context of credit scoring, a rescaled version of AUC is often used:

$$G_1 = 2\,\mathrm{AUC} - 1.$$

$G_1$ is referred to as the Gini index or Gini coefficient,[30] but it should not be confused with the measure of statistical dispersion that is also called the Gini coefficient. $G_1$ is a special case of Somers' D.
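The pairwise estimator and the rescaled Gini value can be sketched as follows. The function names and the toy scores are hypothetical, and the example deliberately contains no tied scores: with ties, the strict comparison in the pairwise count and the trapezoidal area would no longer agree exactly.

```python
def auc_wmw(neg_scores, pos_scores):
    """Fraction of (negative, positive) pairs where the positive scores higher."""
    wins = sum(1 for s0 in neg_scores for s1 in pos_scores if s0 < s1)
    return wins / (len(neg_scores) * len(pos_scores))


def auc_trapezoid(neg_scores, pos_scores):
    """Area under the empirical ROC curve by the trapezoidal rule."""
    thresholds = sorted(set(neg_scores) | set(pos_scores), reverse=True)
    points = [(0.0, 0.0)]
    for t in thresholds:
        fpr = sum(s >= t for s in neg_scores) / len(neg_scores)
        tpr = sum(s >= t for s in pos_scores) / len(pos_scores)
        points.append((fpr, tpr))
    return sum((x1 - x0) * (y0 + y1) / 2
               for (x0, y0), (x1, y1) in zip(points, points[1:]))


# Hypothetical scores with no ties.
neg = [0.7, 0.55, 0.3, 0.2]
pos = [0.9, 0.8, 0.6, 0.4]

auc = auc_wmw(neg, pos)
area = auc_trapezoid(neg, pos)
gini = 2 * auc - 1
```

Here 13 of the 16 negative-positive pairs are ordered correctly, so both routes give the same AUC.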

It is also common to calculate the Area Under the ROC Convex Hull (ROC AUCH = ROCH AUC) as any point on the line segment between two prediction results can be achieved by randomly using one or the other system with probabilities proportional to the relative length of the opposite component of the segment.[31] It is also possible to invert concavities – just as in the figure the worse solution can be reflected to become a better solution; concavities can be reflected in any line segment, but this more extreme form of fusion is much more likely to overfit the data.[32]
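One way to obtain the ROC convex hull is a monotone-chain scan over the operating points, sketched below. The function names and the example points are hypothetical; the sketch always adds the trivial classifiers (0, 0) and (1, 1) and does not attempt the concavity inversion mentioned above.

```python
def roc_convex_hull(points):
    """Upper convex hull of (FPR, TPR) points, including (0, 0) and (1, 1)."""
    pts = sorted(set(points) | {(0.0, 0.0), (1.0, 1.0)})
    hull = []
    for p in pts:
        # Drop the last hull point while it lies on or below the straight
        # line from the point before it to the new point p.
        while len(hull) >= 2:
            (x0, y0), (x1, y1) = hull[-2], hull[-1]
            cross = (x1 - x0) * (p[1] - y0) - (y1 - y0) * (p[0] - x0)
            if cross >= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def area_under(points):
    """Trapezoidal area under a piecewise-linear curve."""
    return sum((x1 - x0) * (y0 + y1) / 2
               for (x0, y0), (x1, y1) in zip(points, points[1:]))


# Hypothetical prediction results from four systems.
systems = [(0.1, 0.5), (0.3, 0.6), (0.4, 0.9), (0.7, 0.8)]
hull = roc_convex_hull(systems)
auch = area_under(hull)
```

The two systems that fall below the hull are dominated: some random mixture of the systems on the hull achieves a higher TPR at the same FPR.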

The machine learning community most often uses the ROC AUC statistic for model comparison.[33] This practice has been questioned because AUC estimates are quite noisy and suffer from other problems.[34][35][36] Nonetheless, the coherence of AUC as a measure of aggregated classification performance has been vindicated, in terms of a uniform rate distribution,[37] and AUC has been linked to a number of other performance metrics such as the Brier score.[38]

Another problem with ROC AUC is that reducing the ROC Curve to a single number ignores the fact that it is about the tradeoffs between the different systems or performance points plotted and not the performance of an individual system, as well as ignoring the possibility of concavity repair, so that related alternative measures such as Informedness[citation needed] or DeltaP are recommended.[23][39] These measures are essentially equivalent to the Gini for a single prediction point with DeltaP' = Informedness = 2AUC-1, whilst DeltaP = Markedness represents the dual (viz. predicting the prediction from the real class) and their geometric mean is the Matthews correlation coefficient.[citation needed]

Whereas ROC AUC varies between 0 and 1 — with an uninformative classifier yielding 0.5 — the alternative measures known as Informedness,[citation needed] Certainty [23] and Gini Coefficient (in the single parameterization or single system case)[citation needed] all have the advantage that 0 represents chance performance whilst 1 represents perfect performance, and −1 represents the "perverse" case of full informedness always giving the wrong response.[40] Bringing chance performance to 0 allows these alternative scales to be interpreted as Kappa statistics. Informedness has been shown to have desirable characteristics for Machine Learning versus other common definitions of Kappa such as Cohen Kappa and Fleiss Kappa.[citation needed][41]

Sometimes it can be more useful to look at a specific region of the ROC Curve rather than at the whole curve. It is possible to compute partial AUC.[42] For example, one could focus on the region of the curve with low false positive rate, which is often of prime interest for population screening tests.[43] Another common approach for classification problems in which P ≪ N (common in bioinformatics applications) is to use a logarithmic scale for the x-axis.[44]
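A partial AUC restricted to low false positive rates can be sketched with a clipped trapezoidal rule. The function name `partial_auc`, the example curve, and the cut-off of 0.2 are assumptions for illustration; no standardisation of the partial area is applied.

```python
def partial_auc(points, max_fpr):
    """Area under a piecewise-linear ROC curve for FPR in [0, max_fpr].

    `points` must be (FPR, TPR) pairs sorted by increasing FPR.
    """
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if x0 >= max_fpr:
            break
        if x1 > max_fpr:
            # Clip the segment at max_fpr using linear interpolation.
            y1 = y0 + (y1 - y0) * (max_fpr - x0) / (x1 - x0)
            x1 = max_fpr
        area += (x1 - x0) * (y0 + y1) / 2
    return area


# Hypothetical ROC curve.
roc = [(0.0, 0.0), (0.1, 0.6), (0.3, 0.8), (1.0, 1.0)]
full_auc = partial_auc(roc, 1.0)
low_fpr_auc = partial_auc(roc, 0.2)
```

The unnormalised partial area is bounded above by `max_fpr`, so values from different cut-offs are not directly comparable.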

The ROC area under the curve is also called c-statistic or c statistic.[45]

Other measures


The Total Operating Characteristic (TOC) also characterizes diagnostic ability while revealing more information than the ROC. For each threshold, ROC reveals two ratios, TP/(TP + FN) and FP/(FP + TN). In other words, ROC reveals $\frac{\text{hits}}{\text{hits} + \text{misses}}$ and $\frac{\text{false alarms}}{\text{false alarms} + \text{correct rejections}}$. On the other hand, TOC shows the total information in the contingency table for each threshold.[46] The TOC method reveals all of the information that the ROC method provides, plus additional important information that ROC does not reveal, i.e. the size of every entry in the contingency table for each threshold. TOC also provides the popular AUC of the ROC.[47]

These figures are the TOC and ROC curves using the same data and thresholds. Consider the point that corresponds to a threshold of 74. The TOC curve shows the number of hits, which is 3, and hence the number of misses, which is 7. Additionally, the TOC curve shows that the number of false alarms is 4 and the number of correct rejections is 16. At any given point in the ROC curve, it is possible to glean values for the ratios $\frac{\text{false alarms}}{\text{false alarms} + \text{correct rejections}}$ and $\frac{\text{hits}}{\text{hits} + \text{misses}}$. For example, at threshold 74, it is evident that the x coordinate is 0.2 and the y coordinate is 0.3. However, these two values are insufficient to construct all entries of the underlying two-by-two contingency table.
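The loss of information can be illustrated with the counts at threshold 74. In the sketch below the first table uses the counts quoted above, while the second table is hypothetical and was chosen only because it yields the same two ratios.

```python
def roc_point(hits, misses, false_alarms, correct_rejections):
    """Collapse a 2x2 contingency table to its ROC coordinates (FPR, TPR)."""
    tpr = hits / (hits + misses)
    fpr = false_alarms / (false_alarms + correct_rejections)
    return fpr, tpr


# Counts at threshold 74, as quoted in the text.
at_74 = dict(hits=3, misses=7, false_alarms=4, correct_rejections=16)

# A hypothetical, different table with the same ratios.
other = dict(hits=30, misses=70, false_alarms=2, correct_rejections=8)

point_74 = roc_point(**at_74)
point_other = roc_point(**other)
```

Both tables map to the same ROC point even though their sizes and prevalences differ, which is the information the TOC retains.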

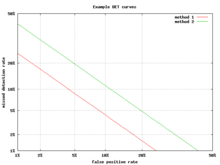

Detection error tradeoff graph


An alternative to the ROC curve is the detection error tradeoff (DET) graph, which plots the false negative rate (missed detections) vs. the false positive rate (false alarms) on non-linearly transformed x- and y-axes. The transformation function is the quantile function of the normal distribution, i.e., the inverse of the cumulative normal distribution. It is, in fact, the same transformation as zROC, below, except that the complement of the hit rate, the miss rate or false negative rate, is used. This alternative spends more graph area on the region of interest. Most of the ROC area is of little interest; one primarily cares about the region tight against the y-axis and the top left corner – which, because of using miss rate instead of its complement, the hit rate, is the lower left corner in a DET plot. Furthermore, DET graphs have the useful property of linearity and a linear threshold behavior for normal distributions.[48] The DET plot is used extensively in the automatic speaker recognition community, where the name DET was first used. The analysis of the ROC performance in graphs with this warping of the axes was used by psychologists in perception studies halfway through the 20th century,[citation needed] where this was dubbed "double probability paper".[49]
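The axis warping can be sketched with the normal quantile function from Python's `statistics` module. The two score distributions below are hypothetical (unit-variance normals with means 0 and 1.5), as are the names `probit` and `det_point`.

```python
from statistics import NormalDist

# Quantile function of the standard normal distribution.
probit = NormalDist().inv_cdf

# Hypothetical score distributions for negatives and positives.
negatives = NormalDist(mu=0.0, sigma=1.0)
positives = NormalDist(mu=1.5, sigma=1.0)


def det_point(threshold):
    """Return the DET coordinates (warped FPR, warped FNR) at a threshold."""
    fpr = 1.0 - negatives.cdf(threshold)  # false alarms
    fnr = positives.cdf(threshold)        # missed detections
    return probit(fpr), probit(fnr)


det_curve = [det_point(t) for t in (0.0, 0.5, 1.0, 1.5)]
```

For these normal scores every point satisfies y = −x − 1.5, a straight line, which illustrates the linearity property. Note that `inv_cdf` is only defined for probabilities strictly between 0 and 1, so the extreme corners of the ROC curve cannot be plotted.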

Z-score

If a standard score is applied to the ROC curve, the curve will be transformed into a straight line.[50] This z-score is based on a normal distribution with a mean of zero and a standard deviation of one. In memory strength theory, one must assume that the zROC is not only linear, but has a slope of 1.0. The normal distributions of targets (studied objects that the subjects need to recall) and lures (non studied objects that the subjects attempt to recall) is the factor causing the zROC to be linear.

The linearity of the zROC curve depends on the standard deviations of the target and lure strength distributions. If the standard deviations are equal, the slope will be 1.0. If the standard deviation of the target strength distribution is larger than the standard deviation of the lure strength distribution, then the slope will be smaller than 1.0. In most studies, it has been found that zROC curve slopes consistently fall below 1, usually between 0.5 and 0.9.[51] Many experiments yielded a zROC slope of 0.8. A slope of 0.8 implies that the variability of the target strength distribution is 25% larger than the variability of the lure strength distribution.[52]
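The relation between the slope and the two standard deviations can be checked numerically. In the sketch below the lure distribution is a standard normal and the target distribution has a standard deviation 25% larger; the means and the two criterion values are arbitrary choices for the illustration.

```python
from statistics import NormalDist

z = NormalDist().inv_cdf  # standard score (probit) transform

# Hypothetical strength distributions: targets are 25% more variable.
lures = NormalDist(mu=0.0, sigma=1.0)
targets = NormalDist(mu=1.0, sigma=1.25)


def zroc_point(criterion):
    """Return (z(FPR), z(TPR)) for a given response criterion."""
    fpr = 1.0 - lures.cdf(criterion)
    tpr = 1.0 - targets.cdf(criterion)
    return z(fpr), z(tpr)


(x0, y0), (x1, y1) = zroc_point(0.0), zroc_point(1.0)
slope = (y1 - y0) / (x1 - x0)
sd_ratio = lures.stdev / targets.stdev
```

The slope equals the ratio of the lure standard deviation to the target standard deviation, here 1/1.25 = 0.8.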

Another variable used is d' (d prime), discussed above in "Further interpretations", which can easily be expressed in terms of z-values. Although d' is a commonly used parameter, it must be recognized that it is only relevant when strictly adhering to the very strong assumptions of strength theory made above.[53]

The z-score of an ROC curve is always linear, as assumed, except in special situations. The Yonelinas familiarity-recollection model is a two-dimensional account of recognition memory. Instead of the subject simply answering yes or no to a specific input, the subject gives the input a feeling of familiarity, which operates like the original ROC curve. What changes, though, is a parameter for Recollection (R). Recollection is assumed to be all-or-none, and it trumps familiarity. If there were no recollection component, zROC would have a predicted slope of 1. However, when adding the recollection component, the zROC curve will be concave up, with a decreased slope. This difference in shape and slope results from an added element of variability due to some items being recollected. Patients with anterograde amnesia are unable to recollect, so their Yonelinas zROC curve would have a slope close to 1.0.[54]

History

The ROC curve was first used during World War II for the analysis of radar signals before it was employed in signal detection theory.[55] Following the attack on Pearl Harbor in 1941, the United States military began new research to increase the prediction of correctly detected Japanese aircraft from their radar signals. For these purposes they measured the ability of a radar receiver operator to make these important distinctions, which was called the Receiver Operating Characteristic.[56]

In the 1950s, ROC curves were employed in psychophysics to assess human (and occasionally non-human animal) detection of weak signals.[55] In medicine, ROC analysis has been extensively used in the evaluation of diagnostic tests.[57][58] ROC curves are also used extensively in epidemiology and medical research and are frequently mentioned in conjunction with evidence-based medicine. In radiology, ROC analysis is a common technique to evaluate new radiology techniques.[59] In the social sciences, ROC analysis is often called the ROC Accuracy Ratio, a common technique for judging the accuracy of default probability models. ROC curves are widely used in laboratory medicine to assess the diagnostic accuracy of a test, to choose the optimal cut-off of a test and to compare diagnostic accuracy of several tests.

ROC curves also proved useful for the evaluation of machine learning techniques. The first application of ROC in machine learning was by Spackman who demonstrated the value of ROC curves in comparing and evaluating different classification algorithms.[60]

ROC curves are also used in verification of forecasts in meteorology.[61]

Radar in detail

As mentioned, ROC curves are critical to radar operation and theory. The signals received at a receiver station, as reflected by a target, are often of very low energy in comparison to the noise floor. The ratio of signal to noise is an important metric when determining if a target will be detected. This signal to noise ratio is directly correlated to the receiver operating characteristics of the whole radar system, which is used to quantify the ability of a radar system.

Consider the development of a radar system. A specification for the abilities of the system may be provided in terms of probability of detection, $P_D$, with a certain tolerance for false alarms, $P_{FA}$. A simplified approximation of the required signal to noise ratio at the receiver station can be calculated by solving[62]

$$P_D = \frac{1}{2}\operatorname{erfc}\left(\operatorname{erfc}^{-1}\left(2P_{FA}\right) - \sqrt{\mathcal{X}}\right)$$

for the signal to noise ratio $\mathcal{X}$. Here, $\mathcal{X}$ is not in decibels, as is common in many radar applications. Conversion to decibels is through $\mathcal{X}_{dB} = 10\log_{10}\mathcal{X}$. From this figure, the common entries in the radar range equation (with noise factors) may be solved, to estimate the required effective radiated power.
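As a rough numerical sketch, assume the approximation takes the commonly quoted form P_D = ½ erfc(erfc⁻¹(2 P_FA) − √X), where X is the linear signal to noise ratio. Python's `math` module has `erfc` but no inverse, so the helper `erfc_inv` below builds one from the normal quantile function. The function names and the specification values (P_D = 0.9, P_FA = 10⁻⁶) are hypothetical.

```python
from math import erfc, log10, sqrt
from statistics import NormalDist


def erfc_inv(y):
    """Inverse complementary error function for 0 < y < 2."""
    # erfc(x) = 2 * Phi(-x * sqrt(2)), hence x = -Phi^{-1}(y / 2) / sqrt(2).
    return -NormalDist().inv_cdf(y / 2.0) / sqrt(2.0)


def required_snr(p_d, p_fa):
    """Solve P_D = 0.5 * erfc(erfc_inv(2 * P_FA) - sqrt(X)) for linear X.

    Assumes p_d > p_fa, so that sqrt(X) is positive.
    """
    root = erfc_inv(2.0 * p_fa) - erfc_inv(2.0 * p_d)
    return root ** 2


# Hypothetical specification.
snr = required_snr(p_d=0.9, p_fa=1e-6)
snr_db = 10.0 * log10(snr)

# Substituting the result back should recover the requested P_D.
p_d_check = 0.5 * erfc(erfc_inv(2.0 * 1e-6) - sqrt(snr))
```

Tightening the false alarm tolerance or raising the detection probability both increase the required signal to noise ratio.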

ROC curves beyond binary classification

The extension of ROC curves for classification problems with more than two classes is cumbersome. Two common approaches for when there are multiple classes are (1) average over all pairwise AUC values[63] and (2) compute the volume under surface (VUS).[64][65] To average over all pairwise classes, one computes the AUC for each pair of classes, using only the examples from those two classes as if there were no other classes, and then averages these AUC values over all possible pairs. When there are c classes there will be c(c − 1) / 2 possible pairs of classes.
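The pairwise averaging can be sketched as below. The text does not say which score to use within a pair, so this example makes an assumption: for each pair it averages the AUC obtained with the first class's score and the AUC obtained with the second class's score. All names and data are hypothetical.

```python
from itertools import combinations


def auc_pair(pos_scores, neg_scores):
    """Probability that a positive outscores a negative; ties count one half."""
    wins = sum((p > n) + 0.5 * (p == n) for p in pos_scores for n in neg_scores)
    return wins / (len(pos_scores) * len(neg_scores))


def mean_pairwise_auc(labels, class_scores):
    """Average AUC over all unordered pairs of classes.

    labels[k] is the true class of example k and class_scores[c][k] is
    the score that example k receives for class c.
    """
    classes = sorted(set(labels))
    pair_aucs = []
    for a, b in combinations(classes, 2):
        idx_a = [k for k, y in enumerate(labels) if y == a]
        idx_b = [k for k, y in enumerate(labels) if y == b]
        # Only examples from classes a and b are used for this pair.
        a_vs_b = auc_pair([class_scores[a][k] for k in idx_a],
                          [class_scores[a][k] for k in idx_b])
        b_vs_a = auc_pair([class_scores[b][k] for k in idx_b],
                          [class_scores[b][k] for k in idx_a])
        pair_aucs.append((a_vs_b + b_vs_a) / 2)
    return sum(pair_aucs) / len(pair_aucs), len(pair_aucs)


labels = ["x", "x", "y", "y", "z", "z"]
class_scores = {
    "x": [0.8, 0.6, 0.3, 0.1, 0.2, 0.1],
    "y": [0.1, 0.3, 0.6, 0.5, 0.2, 0.4],
    "z": [0.1, 0.1, 0.1, 0.55, 0.6, 0.5],
}
mean_auc, n_pairs = mean_pairwise_auc(labels, class_scores)
```

With c = 3 classes the loop visits c(c − 1)/2 = 3 pairs.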

The volume under surface approach has one plot a hypersurface rather than a curve and then measure the hypervolume under that hypersurface. Every possible decision rule that one might use for a classifier for c classes can be described in terms of its true positive rates $(\mathrm{TPR}_1, \ldots, \mathrm{TPR}_c)$. It is this set of rates that defines a point, and the set of all possible decision rules yields a cloud of points that define the hypersurface. With this definition, the VUS is the probability that the classifier will be able to correctly label all c examples when it is given a set that has one randomly selected example from each class. The implementation of a classifier that knows that its input set consists of one example from each class might first compute a goodness-of-fit score for each of the $c^2$ possible pairings of an example to a class, and then employ the Hungarian algorithm to maximize the sum of the c selected scores over all c! possible ways to assign exactly one example to each class.

Given the success of ROC curves for the assessment of classification models, the extension of ROC curves for other supervised tasks has also been investigated. Notable proposals for regression problems are the so-called regression error characteristic (REC) Curves [66] and the Regression ROC (RROC) curves.[67] In the latter, RROC curves become extremely similar to ROC curves for classification, with the notions of asymmetry, dominance and convex hull. Also, the area under RROC curves is proportional to the error variance of the regression model.

References

1. "Detector Performance Analysis Using ROC Curves - MATLAB & Simulink Example". www.mathworks.com. Retrieved 11 August 2016.

2. Swets, John A. (1996). Signal detection theory and ROC analysis in psychology and diagnostics: collected papers. Lawrence Erlbaum Associates, Mahwah, NJ.

3. Junge, M. R.; Dettori, J. R. (May 3, 2024). "ROC Solid: Receiver Operator Characteristic (ROC) Curves as a Foundation for Better Diagnostic Tests". Global Spine Journal. 8 (4): 424–429. doi:10.1177/2192568218778294. PMC 6022965. PMID 29977728.

4. Peres, D. J.; Cancelliere, A. (2014-12-08). "Derivation and evaluation of landslide-triggering thresholds by a Monte Carlo approach". Hydrol. Earth Syst. Sci. 18 (12): 4913–4931. Bibcode:2014HESS...18.4913P. doi:10.5194/hess-18-4913-2014. ISSN 1607-7938.

5. Murphy, Allan H. (1996-03-01). "The Finley Affair: A Signal Event in the History of Forecast Verification". Weather and Forecasting. 11 (1): 3–20. Bibcode:1996WtFor..11....3M. doi:10.1175/1520-0434(1996)011<0003:tfaase>2.0.co;2. ISSN 0882-8156.

6. Peres, D. J.; Iuppa, C.; Cavallaro, L.; Cancelliere, A.; Foti, E. (2015-10-01). "Significant wave height record extension by neural networks and reanalysis wind data". Ocean Modelling. 94: 128–140. Bibcode:2015OcMod..94..128P. doi:10.1016/j.ocemod.2015.08.002.

7. Sushkova, Olga; Morozov, Alexei; Gabova, Alexandra; Karabanov, Alexei; Illarioshkin, Sergey (2021). "A Statistical Method for Exploratory Data Analysis Based on 2D and 3D Area under Curve Diagrams: Parkinson's Disease Investigation". Sensors. 21 (14): 4700. Bibcode:2021Senso..21.4700S. doi:10.3390/s21144700. PMC 8309570. PMID 34300440.

8. Fawcett, Tom (2006). "An Introduction to ROC Analysis" (PDF). Pattern Recognition Letters. 27 (8): 861–874. doi:10.1016/j.patrec.2005.10.010. S2CID 2027090.

9. Provost, Foster; Fawcett, Tom (2013-08-01). "Data Science for Business: What You Need to Know about Data Mining and Data-Analytic Thinking". O'Reilly Media, Inc.

10. Powers, David M. W. (2011). "Evaluation: From Precision, Recall and F-Measure to ROC, Informedness, Markedness & Correlation". Journal of Machine Learning Technologies. 2 (1): 37–63.

11. Ting, Kai Ming (2011). Sammut, Claude; Webb, Geoffrey I. (eds.). Encyclopedia of machine learning. Springer. doi:10.1007/978-0-387-30164-8. ISBN 978-0-387-30164-8.

12. Brooks, Harold; Brown, Barb; Ebert, Beth; Ferro, Chris; Jolliffe, Ian; Koh, Tieh-Yong; Roebber, Paul; Stephenson, David (2015-01-26). "WWRP/WGNE Joint Working Group on Forecast Verification Research". Collaboration for Australian Weather and Climate Research. World Meteorological Organisation. Retrieved 2019-07-17.

13. Chicco, D.; Jurman, G. (January 2020). "The advantages of the Matthews correlation coefficient (MCC) over F1 score and accuracy in binary classification evaluation". BMC Genomics. 21 (1): 6-1–6-13. doi:10.1186/s12864-019-6413-7. PMC 6941312. PMID 31898477.

14. Chicco, D.; Toetsch, N.; Jurman, G. (February 2021). "The Matthews correlation coefficient (MCC) is more reliable than balanced accuracy, bookmaker informedness, and markedness in two-class confusion matrix evaluation". BioData Mining. 14 (13): 13. doi:10.1186/s13040-021-00244-z. PMC 7863449. PMID 33541410.

15. Tharwat, A. (August 2018). "Classification assessment methods". Applied Computing and Informatics. 17: 168–192. doi:10.1016/j.aci.2018.08.003.

16. "classification - AUC-ROC of a random classifier". Data Science Stack Exchange. Retrieved 2020-11-30.

17. Chicco, Davide; Jurman, Giuseppe (2023-02-17). "The Matthews correlation coefficient (MCC) should replace the ROC AUC as the standard metric for assessing binary classification". BioData Mining. 16 (1). Springer Science and Business Media LLC: 4. doi:10.1186/s13040-023-00322-4. hdl:10281/430042. ISSN 1756-0381. PMC 9938573. PMID 36800973.

18. Muschelli, John (2019-12-23). "ROC and AUC with a binary predictor: a potentially misleading metric". Journal of Classification. 37 (3). Springer Science and Business Media LLC: 696–708. doi:10.1007/s00357-019-09345-1. ISSN 0176-4268. PMC 7695228. PMID 33250548.

19. Lobo, Jorge M.; Jiménez-Valverde, Alberto; Real, Raimundo (2008). "AUC: a misleading measure of the performance of predictive distribution models". Global Ecology and Biogeography. 17 (2). Wiley: 145–151. doi:10.1111/j.1466-8238.2007.00358.x. ISSN 1466-822X.

20. Halligan, Steve; Altman, Douglas G.; Mallett, Susan (2015-01-20). "Disadvantages of using the area under the receiver operating characteristic curve to assess imaging tests: A discussion and proposal for an alternative approach". European Radiology. 25 (4). Springer Science and Business Media LLC: 932–939. doi:10.1007/s00330-014-3487-0. ISSN 0938-7994. PMC 4356897. PMID 25599932.

21. Berrar, D.; Flach, P. (2011-03-21). "Caveats and pitfalls of ROC analysis in clinical microarray research (and how to avoid them)". Briefings in Bioinformatics. 13 (1). Oxford University Press (OUP): 83–97. doi:10.1093/bib/bbr008. ISSN 1467-5463.

- ^ Řezáč, M., Řezáč, F. (2011). "How to Measure the Quality of Credit Scoring Models". Czech Journal of Economics and Finance (Finance a úvěr). 61 (5). Charles University Prague, Faculty of Social Sciences: 486–507.

- ^ a b c Powers, David MW (2012). "ROC-ConCert: ROC-Based Measurement of Consistency and Certainty" (PDF). Spring Congress on Engineering and Technology (SCET). Vol. 2. IEEE. pp. 238–241.

- ^ Fogarty, James; Baker, Ryan S.; Hudson, Scott E. (2005). "Case studies in the use of ROC curve analysis for sensor-based estimates in human computer interaction". ACM International Conference Proceeding Series, Proceedings of Graphics Interface 2005. Waterloo, ON: Canadian Human-Computer Communications Society.

- ^ Hastie, Trevor; Tibshirani, Robert; Friedman, Jerome H. (2009). The elements of statistical learning: data mining, inference, and prediction (2nd ed.).

- ^ Fawcett, Tom (2006); An introduction to ROC analysis, Pattern Recognition Letters, 27, 861–874.

- ^ Hanley, James A.; McNeil, Barbara J. (1982). "The Meaning and Use of the Area under a Receiver Operating Characteristic (ROC) Curve". Radiology. 143 (1): 29–36. doi:10.1148/radiology.143.1.7063747. PMID 7063747. S2CID 10511727.

- ^ Mason, Simon J.; Graham, Nicholas E. (2002). "Areas beneath the relative operating characteristics (ROC) and relative operating levels (ROL) curves: Statistical significance and interpretation" (PDF). Quarterly Journal of the Royal Meteorological Society. 128 (584): 2145–2166. Bibcode:2002QJRMS.128.2145M. CiteSeerX 10.1.1.458.8392. doi:10.1256/003590002320603584. S2CID 121841664. Archived from the original (PDF) on 2008-11-20.

- ^ Calders, Toon; Jaroszewicz, Szymon (2007). "Efficient AUC Optimization for Classification". In Kok, Joost N.; Koronacki, Jacek; Lopez de Mantaras, Ramon; Matwin, Stan; Mladenič, Dunja; Skowron, Andrzej (eds.). Knowledge Discovery in Databases: PKDD 2007. Lecture Notes in Computer Science. Vol. 4702. Berlin, Heidelberg: Springer. pp. 42–53. doi:10.1007/978-3-540-74976-9_8. ISBN 978-3-540-74976-9.

- ^ Hand, David J.; and Till, Robert J. (2001); A simple generalization of the area under the ROC curve for multiple class classification problems, Machine Learning, 45, 171–186.

- ^ Provost, F.; Fawcett, T. (2001). "Robust classification for imprecise environments". Machine Learning. 42 (3): 203–231. arXiv:cs/0009007. doi:10.1023/a:1007601015854. S2CID 5415722.

- ^ Flach, P.A.; Wu, S. (2005). "Repairing concavities in ROC curves." (PDF). 19th International Joint Conference on Artificial Intelligence (IJCAI'05). pp. 702–707.

- ^ Hanley, James A.; McNeil, Barbara J. (1983-09-01). "A method of comparing the areas under receiver operating characteristic curves derived from the same cases". Radiology. 148 (3): 839–843. doi:10.1148/radiology.148.3.6878708. PMID 6878708.

- ^ Hanczar, Blaise; Hua, Jianping; Sima, Chao; Weinstein, John; Bittner, Michael; Dougherty, Edward R (2010). "Small-sample precision of ROC-related estimates". Bioinformatics. 26 (6): 822–830. doi:10.1093/bioinformatics/btq037. PMID 20130029.

- ^ Lobo, Jorge M.; Jiménez-Valverde, Alberto; Real, Raimundo (2008). "AUC: a misleading measure of the performance of predictive distribution models". Global Ecology and Biogeography. 17 (2): 145–151. doi:10.1111/j.1466-8238.2007.00358.x. S2CID 15206363.

- ^ Hand, David J (2009). "Measuring classifier performance: A coherent alternative to the area under the ROC curve". Machine Learning. 77: 103–123. doi:10.1007/s10994-009-5119-5. hdl:10044/1/18420.

- ^ Flach, P.A.; Hernandez-Orallo, J.; Ferri, C. (2011). "A coherent interpretation of AUC as a measure of aggregated classification performance." (PDF). Proceedings of the 28th International Conference on Machine Learning (ICML-11). pp. 657–664.

- ^ Hernandez-Orallo, J.; Flach, P.A.; Ferri, C. (2012). "A unified view of performance metrics: translating threshold choice into expected classification loss" (PDF). Journal of Machine Learning Research. 13: 2813–2869.

- ^ Powers, David M.W. (2012). "The Problem of Area Under the Curve". International Conference on Information Science and Technology.

- ^ Powers, David M. W. (2003). "Recall and Precision versus the Bookmaker" (PDF). Proceedings of the International Conference on Cognitive Science (ICSC-2003), Sydney Australia, 2003, pp. 529–534.

- ^ Powers, David M. W. (2012). "The Problem with Kappa" (PDF). Conference of the European Chapter of the Association for Computational Linguistics (EACL2012) Joint ROBUS-UNSUP Workshop. Archived from the original (PDF) on 2016-05-18. Retrieved 2012-07-20.

- ^ McClish, Donna Katzman (1989-08-01). "Analyzing a Portion of the ROC Curve". Medical Decision Making. 9 (3): 190–195. doi:10.1177/0272989X8900900307. PMID 2668680. S2CID 24442201.

- ^ Dodd, Lori E.; Pepe, Margaret S. (2003). "Partial AUC Estimation and Regression". Biometrics. 59 (3): 614–623. doi:10.1111/1541-0420.00071. PMID 14601762. S2CID 23054670.

- ^ Karplus, Kevin (2011); Better than Chance: the importance of null models, University of California, Santa Cruz, in Proceedings of the First International Workshop on Pattern Recognition in Proteomics, Structural Biology and Bioinformatics (PR PS BB 2011)

- ^ "C-Statistic: Definition, Examples, Weighting and Significance". Statistics How To. August 28, 2016.

- ^ Pontius, Robert Gilmore; Parmentier, Benoit (2014). "Recommendations for using the Relative Operating Characteristic (ROC)". Landscape Ecology. 29 (3): 367–382. doi:10.1007/s10980-013-9984-8. S2CID 15924380.

- ^ Pontius, Robert Gilmore; Si, Kangping (2014). "The total operating characteristic to measure diagnostic ability for multiple thresholds". International Journal of Geographical Information Science. 28 (3): 570–583. doi:10.1080/13658816.2013.862623. S2CID 29204880.

- ^ Navratil, J.; Klusacek, D. (2007-04-01). "On Linear DETs". 2007 IEEE International Conference on Acoustics, Speech and Signal Processing - ICASSP '07. Vol. 4. pp. IV–229–IV–232. doi:10.1109/ICASSP.2007.367205. ISBN 978-1-4244-0727-9. S2CID 18173315.

- ^ Dev P. Chakraborty (December 14, 2017). Observer Performance Methods for Diagnostic Imaging: Foundations, Modeling, and Applications with R-Based Examples. CRC Press. p. 214. ISBN 9781351230711. Retrieved July 11, 2019.

- ^ MacMillan, Neil A.; Creelman, C. Douglas (2005). Detection Theory: A User's Guide (2nd ed.). Mahwah, NJ: Lawrence Erlbaum Associates. ISBN 978-1-4106-1114-7.

- ^ Glanzer, Murray; Kim, Kisok; Hilford, Andy; Adams, John K. (1999). "Slope of the receiver-operating characteristic in recognition memory". Journal of Experimental Psychology: Learning, Memory, and Cognition. 25 (2): 500–513. doi:10.1037/0278-7393.25.2.500.

- ^ Ratcliff, Roger; McKoon, Gail; Tindall, Michael (1994). "Empirical generality of data from recognition memory ROC functions and implications for GMMs". Journal of Experimental Psychology: Learning, Memory, and Cognition. 20 (4): 763–785. CiteSeerX 10.1.1.410.2114. doi:10.1037/0278-7393.20.4.763. PMID 8064246.

- ^ Zhang, Jun; Mueller, Shane T. (2005). "A note on ROC analysis and non-parametric estimate of sensitivity". Psychometrika. 70: 203–212. CiteSeerX 10.1.1.162.1515. doi:10.1007/s11336-003-1119-8. S2CID 122355230.

- ^ Yonelinas, Andrew P.; Kroll, Neal E. A.; Dobbins, Ian G.; Lazzara, Michele; Knight, Robert T. (1998). "Recollection and familiarity deficits in amnesia: Convergence of remember-know, process dissociation, and receiver operating characteristic data". Neuropsychology. 12 (3): 323–339. doi:10.1037/0894-4105.12.3.323. PMID 9673991.

- ^ a b Green, David M.; Swets, John A. (1966). Signal detection theory and psychophysics. New York, NY: John Wiley and Sons Inc. ISBN 978-0-471-32420-1.

- ^ "Using the Receiver Operating Characteristic (ROC) curve to analyze a classification model: A final note of historical interest" (PDF). Department of Mathematics, University of Utah. Archived (PDF) from the original on 2020-08-22. Retrieved May 25, 2017.

- ^ Zweig, Mark H.; Campbell, Gregory (1993). "Receiver-operating characteristic (ROC) plots: a fundamental evaluation tool in clinical medicine" (PDF). Clinical Chemistry. 39 (4): 561–577. doi:10.1093/clinchem/39.4.561. PMID 8472349.

- ^ Pepe, Margaret S. (2003). The statistical evaluation of medical tests for classification and prediction. New York, NY: Oxford. ISBN 978-0-19-856582-6.

- ^ Obuchowski, Nancy A. (2003). "Receiver operating characteristic curves and their use in radiology". Radiology. 229 (1): 3–8. doi:10.1148/radiol.2291010898. PMID 14519861.

- ^ Spackman, Kent A. (1989). "Signal detection theory: Valuable tools for evaluating inductive learning". Proceedings of the Sixth International Workshop on Machine Learning. San Mateo, CA: Morgan Kaufmann. pp. 160–163.

- ^ Kharin, Viatcheslav (2003). "On the ROC score of probability forecasts". Journal of Climate. 16 (24): 4145–4150. Bibcode:2003JCli...16.4145K. doi:10.1175/1520-0442(2003)016<4145:OTRSOP>2.0.CO;2.

- ^ "Fundamentals of Radar", Digital Signal Processing Techniques and Applications in Radar Image Processing, Hoboken, NJ, USA: John Wiley & Sons, Inc., pp. 93–115, 2008-01-29, doi:10.1002/9780470377765.ch4, ISBN 9780470377765, retrieved 2023-05-20

- ^ Hand, D.J.; Till, R.J. (2001). "A Simple Generalisation of the Area Under the ROC Curve for Multiple Class Classification Problems". Machine Learning. 45 (2): 171–186. doi:10.1023/A:1010920819831.

- ^ Mossman, D. (1999). "Three-way ROCs". Medical Decision Making. 19 (1): 78–89. doi:10.1177/0272989x9901900110. PMID 9917023. S2CID 24623127.

- ^ Ferri, C.; Hernandez-Orallo, J.; Salido, M.A. (2003). "Volume under the ROC Surface for Multi-class Problems". Machine Learning: ECML 2003. pp. 108–120.

- ^ Bi, J.; Bennett, K.P. (2003). "Regression error characteristic curves" (PDF). Twentieth International Conference on Machine Learning (ICML-2003). Washington, DC.

- ^ Hernandez-Orallo, J. (2013). "ROC curves for regression". Pattern Recognition. 46 (12): 3395–3411. Bibcode:2013PatRe..46.3395H. doi:10.1016/j.patcog.2013.06.014. hdl:10251/40252. S2CID 15651724.

External links

- Animated ROC demo

- ROC demo

- another ROC demo

- ROC video explanation

- An Introduction to the Total Operating Characteristic: Utility in Land Change Model Evaluation

- How to run the TOC Package in R

- TOC R package on Github

- Excel Workbook for generating TOC curves

Further reading

- Balakrishnan, Narayanaswamy (1991); Handbook of the Logistic Distribution, Marcel Dekker, Inc., ISBN 978-0-8247-8587-1

- Brown, Christopher D.; Davis, Herbert T. (2006). "Receiver operating characteristic curves and related decision measures: a tutorial". Chemometrics and Intelligent Laboratory Systems. 80: 24–38. doi:10.1016/j.chemolab.2005.05.004.

- Rotello, Caren M.; Heit, Evan; Dubé, Chad (2014). "When more data steer us wrong: replications with the wrong dependent measure perpetuate erroneous conclusions" (PDF). Psychonomic Bulletin & Review. 22 (4): 944–954. doi:10.3758/s13423-014-0759-2. PMID 25384892. S2CID 6046065.

- Fawcett, Tom (2004). "ROC Graphs: Notes and Practical Considerations for Researchers" (PDF). Pattern Recognition Letters. 27 (8): 882–891. CiteSeerX 10.1.1.145.4649. doi:10.1016/j.patrec.2005.10.012.

- Gonen, Mithat (2007); Analyzing Receiver Operating Characteristic Curves Using SAS, SAS Press, ISBN 978-1-59994-298-8

- Greene, William H. (2003); Econometric Analysis, fifth edition, Prentice Hall, ISBN 0-13-066189-9

- Heagerty, Patrick J.; Lumley, Thomas; Pepe, Margaret S. (2000). "Time-dependent ROC Curves for Censored Survival Data and a Diagnostic Marker". Biometrics. 56 (2): 337–344. doi:10.1111/j.0006-341x.2000.00337.x. PMID 10877287. S2CID 8822160.

- Hosmer, David W.; and Lemeshow, Stanley (2000); Applied Logistic Regression, 2nd ed., New York, NY: Wiley, ISBN 0-471-35632-8

- Lasko, Thomas A.; Bhagwat, Jui G.; Zou, Kelly H.; Ohno-Machado, Lucila (2005). "The use of receiver operating characteristic curves in biomedical informatics". Journal of Biomedical Informatics. 38 (5): 404–415. CiteSeerX 10.1.1.97.9674. doi:10.1016/j.jbi.2005.02.008. PMID 16198999.

- Mas, Jean-François; Filho, Britaldo Soares; Pontius, Jr, Robert Gilmore; Gutiérrez, Michelle Farfán; Rodrigues, Hermann (2013). "A suite of tools for ROC analysis of spatial models". ISPRS International Journal of Geo-Information. 2 (3): 869–887. Bibcode:2013IJGI....2..869M. doi:10.3390/ijgi2030869.

- Pontius, Jr, Robert Gilmore; Parmentier, Benoit (2014). "Recommendations for using the Relative Operating Characteristic (ROC)". Landscape Ecology. 29 (3): 367–382. doi:10.1007/s10980-013-9984-8. S2CID 15924380.

- Pontius, Jr, Robert Gilmore; Pacheco, Pablo (2004). "Calibration and validation of a model of forest disturbance in the Western Ghats, India 1920–1990". GeoJournal. 61 (4): 325–334. doi:10.1007/s10708-004-5049-5. S2CID 155073463.

- Pontius, Jr, Robert Gilmore; Batchu, Kiran (2003). "Using the relative operating characteristic to quantify certainty in prediction of location of land cover change in India". Transactions in GIS. 7 (4): 467–484. doi:10.1111/1467-9671.00159. S2CID 14452746.

- Pontius, Jr, Robert Gilmore; Schneider, Laura (2001). "Land-use change model validation by a ROC method for the Ipswich watershed, Massachusetts, USA". Agriculture, Ecosystems & Environment. 85 (1–3): 239–248. doi:10.1016/S0167-8809(01)00187-6.

- Stephan, Carsten; Wesseling, Sebastian; Schink, Tania; Jung, Klaus (2003). "Comparison of Eight Computer Programs for Receiver-Operating Characteristic Analysis". Clinical Chemistry. 49 (3): 433–439. doi:10.1373/49.3.433. PMID 12600955.

- Swets, John A.; Dawes, Robyn M.; and Monahan, John (2000); Better Decisions through Science, Scientific American, October, pp. 82–87

- Zou, Kelly H.; O'Malley, A. James; Mauri, Laura (2007). "Receiver-operating characteristic analysis for evaluating diagnostic tests and predictive models". Circulation. 115 (5): 654–7. doi:10.1161/circulationaha.105.594929. PMID 17283280.

- Zhou, Xiao-Hua; Obuchowski, Nancy A.; McClish, Donna K. (2002). Statistical Methods in Diagnostic Medicine. New York, NY: Wiley & Sons. ISBN 978-0-471-34772-9.

- Chicco D.; Jurman G. (2023). "The Matthews correlation coefficient (MCC) should replace the ROC AUC as the standard metric for assessing binary classification". BioData Mining. 16 (1): 4. doi:10.1186/s13040-023-00322-4. PMC 9938573. PMID 36800973.

$$
\begin{aligned}
A &= \int_{x=0}^{1} \mbox{TPR}(\mbox{FPR}^{-1}(x))\,dx \\[5pt]
&= \int_{\infty}^{-\infty} \mbox{TPR}(T)\,\mbox{FPR}'(T)\,dT \\[5pt]
&= \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} I(T' \geq T)\,f_{1}(T')\,f_{0}(T)\,dT'\,dT = P(X_{1} \geq X_{0})
\end{aligned}
$$

$$
\text{AUC}(f) = \frac{\sum_{t_{0} \in \mathcal{D}^{0}} \sum_{t_{1} \in \mathcal{D}^{1}} \textbf{1}[f(t_{0}) < f(t_{1})]}{|\mathcal{D}^{0}| \cdot |\mathcal{D}^{1}|},
$$

$$
\textbf{1}[f(t_{0}) < f(t_{1})]
$$
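The pairwise form of the AUC, that is, the fraction of (negative, positive) example pairs in which the positive example receives the strictly higher score, can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function name `pairwise_auc` and the sample scores are hypothetical, and ties contribute 0 because the indicator uses a strict inequality.

```python
from itertools import product


def pairwise_auc(neg_scores, pos_scores):
    """Estimate AUC as the share of (negative, positive) pairs
    where the positive example scores strictly higher.

    neg_scores: classifier scores f(t0) for the negative examples (D^0)
    pos_scores: classifier scores f(t1) for the positive examples (D^1)
    """
    if not neg_scores or not pos_scores:
        raise ValueError("need at least one example of each class")
    # Indicator 1[f(t0) < f(t1)] summed over every cross-class pair.
    wins = sum(1 for s0, s1 in product(neg_scores, pos_scores) if s0 < s1)
    # Normalise by the number of pairs, |D^0| * |D^1|.
    return wins / (len(neg_scores) * len(pos_scores))


# Hypothetical scores, chosen only for illustration.
neg = [0.1, 0.4, 0.35]
pos = [0.8, 0.3, 0.9]
print(pairwise_auc(neg, pos))
```

The double loop costs O(|D⁰|·|D¹|) comparisons, which is fine for small samples; for large datasets a rank-based computation is the usual alternative.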