Probability distribution

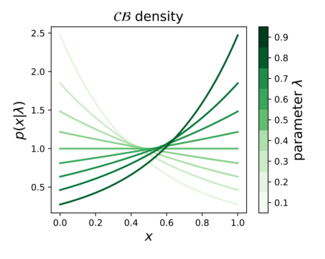

In probability theory, statistics, and machine learning, the continuous Bernoulli distribution[1][2][3] is a family of continuous probability distributions parameterized by a single shape parameter $\lambda \in (0,1)$, defined on the unit interval $x \in [0,1]$, by:

$$p(x \mid \lambda) \propto \lambda^x (1-\lambda)^{1-x}.$$

The normalized density is $p(x \mid \lambda) = C(\lambda)\,\lambda^x (1-\lambda)^{1-x}$, with normalizing constant

$$C(\lambda) = \begin{cases} 2 & \text{if } \lambda = \frac{1}{2}, \\ \dfrac{2\tanh^{-1}(1-2\lambda)}{1-2\lambda} & \text{otherwise.} \end{cases}$$
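
As a numerical sanity check of the definition, the following minimal Python sketch (standard library only) evaluates the normalized density with $C(\lambda) = 2\tanh^{-1}(1-2\lambda)/(1-2\lambda)$ for $\lambda \neq \tfrac{1}{2}$ and $C(\tfrac{1}{2}) = 2$, then integrates it over $[0,1]$ with a midpoint rule. The helper names `cb_norm_const`, `cb_pdf` and `integrate_unit` are hypothetical, and the series cutoff near $\lambda = \tfrac{1}{2}$ is an assumed numerical choice, not part of the definition.

```python
import math

def cb_norm_const(lam: float) -> float:
    """Normalizing constant C(lambda) of the continuous Bernoulli density."""
    if not 0.0 < lam < 1.0:
        raise ValueError("lam must lie in the open interval (0, 1)")
    t = 1.0 - 2.0 * lam
    if abs(t) < 1e-4:
        # The closed form is 0/0 at lam = 1/2; use the series
        # 2*atanh(t)/t = 2*(1 + t**2/3 + t**4/5 + ...) near that point.
        return 2.0 * (1.0 + t * t / 3.0 + t ** 4 / 5.0)
    return 2.0 * math.atanh(t) / t

def cb_pdf(x: float, lam: float) -> float:
    """Continuous Bernoulli density C(lam) * lam**x * (1 - lam)**(1 - x)."""
    if not 0.0 <= x <= 1.0:
        return 0.0  # zero density outside the unit interval
    return cb_norm_const(lam) * lam ** x * (1.0 - lam) ** (1.0 - x)

def integrate_unit(f, n: int = 10_000) -> float:
    """Midpoint-rule approximation of the integral of f over [0, 1]."""
    h = 1.0 / n
    return h * sum(f((i + 0.5) * h) for i in range(n))

for lam in (0.05, 0.3, 0.5, 0.9):
    total = integrate_unit(lambda x, lam=lam: cb_pdf(x, lam))
    print(f"lambda = {lam}: integral of the density is about {total:.6f}")
```

Each printed integral should be close to 1; the series branch only sidesteps the removable 0/0 singularity of the closed form at $\lambda = \tfrac{1}{2}$.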

The continuous Bernoulli distribution arises in deep learning and computer vision, specifically in the context of variational autoencoders,[4][5] for modeling the pixel intensities of natural images. As such, it defines a proper probabilistic counterpart for the commonly used binary cross entropy loss, which is often applied to continuous, $[0,1]$-valued data.[6][7][8][9] This practice amounts to ignoring the normalizing constant of the continuous Bernoulli distribution, since the binary cross entropy loss only defines a true log-likelihood for discrete, $\{0,1\}$-valued data.
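
To make the relationship concrete, the sketch below writes the continuous Bernoulli log-density as the negated binary cross entropy plus $\log C(\lambda)$, where $C(\lambda) = 2\tanh^{-1}(1-2\lambda)/(1-2\lambda)$ (and $C(\tfrac{1}{2}) = 2$). It is a minimal standard-library illustration under assumed names (`bce`, `cb_log_lik`, the observation `x_obs = 0.2` and the parameter grid are all hypothetical), not the loss implementation of any particular framework.

```python
import math

def cb_log_norm(lam: float) -> float:
    """log C(lam), the continuous Bernoulli log normalizing constant (0 < lam < 1)."""
    t = 1.0 - 2.0 * lam
    if abs(t) < 1e-4:
        # Series 2*atanh(t)/t = 2*(1 + t**2/3 + ...) avoids 0/0 at lam = 1/2.
        return math.log(2.0 * (1.0 + t * t / 3.0))
    return math.log(2.0 * math.atanh(t) / t)

def bce(x: float, lam: float) -> float:
    """Binary cross entropy of a target x in [0, 1] against a prediction lam."""
    return -(x * math.log(lam) + (1.0 - x) * math.log(1.0 - lam))

def cb_log_lik(x: float, lam: float) -> float:
    """Continuous Bernoulli log-density: minus the BCE, plus log C(lam)."""
    return cb_log_norm(lam) - bce(x, lam)

x_obs = 0.2  # a single hypothetical pixel intensity
grid = [i / 1000 for i in range(1, 1000)]  # candidate values of lam in (0, 1)
lam_bce = min(grid, key=lambda lam: bce(x_obs, lam))
lam_cb = max(grid, key=lambda lam: cb_log_lik(x_obs, lam))
print(f"BCE minimizer: {lam_bce:.3f}")
print(f"continuous Bernoulli maximum-likelihood lam: {lam_cb:.3f}")
```

Because $\log C(\lambda)$ depends on $\lambda$, dropping it is not harmless: the grid search illustrates that minimizing the binary cross entropy and maximizing the continuous Bernoulli likelihood generally select different values of $\lambda$ for the same $[0,1]$-valued observation.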

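The continuous Bernoulli also defines an exponential family of distributions. Writing $\eta = \log\frac{\lambda}{1-\lambda}$ for the natural parameter, the density can be rewritten in canonical form:

$$p(x \mid \eta) \propto \exp(\eta x),$$

whose normalized version is $p(x \mid \eta) = \dfrac{\eta\, e^{\eta x}}{e^{\eta} - 1}$ for $\eta \neq 0$, and the uniform density on $[0,1]$ when $\eta = 0$ (that is, $\lambda = \tfrac{1}{2}$).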
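
The two parameterizations can be compared pointwise. This sketch assumes $\eta = \log(\lambda/(1-\lambda))$ and the canonical normalization $\eta e^{\eta x}/(e^{\eta}-1)$, which follows from $\int_0^1 e^{\eta x}\,dx = (e^{\eta}-1)/\eta$; the function names are hypothetical helpers written for this illustration.

```python
import math

def natural_param(lam: float) -> float:
    """Natural parameter eta = log(lam / (1 - lam)), i.e. the logit of lam."""
    return math.log(lam / (1.0 - lam))

def cb_pdf_canonical(x: float, eta: float) -> float:
    """Canonical form p(x | eta) = eta * exp(eta * x) / (exp(eta) - 1)."""
    if abs(eta) < 1e-8:
        return 1.0  # the eta -> 0 limit is the uniform density on [0, 1]
    return eta * math.exp(eta * x) / math.expm1(eta)

def cb_pdf_lambda(x: float, lam: float) -> float:
    """Original form C(lam) * lam**x * (1 - lam)**(1 - x)."""
    t = 1.0 - 2.0 * lam
    c = 2.0 if t == 0.0 else 2.0 * math.atanh(t) / t
    return c * lam ** x * (1.0 - lam) ** (1.0 - x)

for lam in (0.1, 0.37, 0.8):
    eta = natural_param(lam)
    for x in (0.0, 0.5, 1.0):
        print(f"lam={lam}, x={x}: "
              f"{cb_pdf_canonical(x, eta):.6f} vs {cb_pdf_lambda(x, lam):.6f}")
```

Using `math.expm1` keeps the denominator accurate when $\eta$ is small, which is where $e^{\eta} - 1$ would otherwise lose precision.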

Related distributions

Bernoulli distribution

The continuous Bernoulli can be thought of as a continuous relaxation of the Bernoulli distribution, which is defined on the discrete set $\{0,1\}$ by the probability mass function:

$$p(x) = \lambda^x (1-\lambda)^{1-x},$$

where $\lambda$ is a scalar parameter between 0 and 1. Applying this same functional form on the continuous interval $[0,1]$ results in the continuous Bernoulli probability density function, up to a normalizing constant.

Beta distribution

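The Beta distribution has the density function:

$$p(x \mid \alpha, \beta) = \frac{x^{\alpha-1}(1-x)^{\beta-1}}{B(\alpha, \beta)},$$

which can be rewritten as:

$$p(x_1, x_2 \mid \alpha_1, \alpha_2) = \frac{\Gamma(\alpha_1 + \alpha_2)}{\Gamma(\alpha_1)\Gamma(\alpha_2)}\, x_1^{\alpha_1 - 1} x_2^{\alpha_2 - 1},$$

where $\alpha_1, \alpha_2$ are positive scalar parameters, and $(x_1, x_2)$ represents an arbitrary point inside the 1-simplex, $\Delta^1 = \{(x_1, x_2) : x_1 > 0,\ x_2 > 0,\ x_1 + x_2 = 1\}$. Switching the role of the parameter and the argument in this density function, and absorbing the factor $x_1^{-1} x_2^{-1}$ into the normalizer, we obtain:

$$p(\alpha_1, \alpha_2 \mid x_1, x_2) = C(x_1, x_2)\, x_1^{\alpha_1} x_2^{\alpha_2}.$$

This family is only identifiable up to the linear constraint $\alpha_1 + \alpha_2 = 1$, whence, writing $\alpha_1 = x$ and $x_1 = \lambda$, we obtain:

$$p(x \mid \lambda) = C(\lambda)\, \lambda^x (1-\lambda)^{1-x},$$

corresponding exactly to the continuous Bernoulli density.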

Exponential distribution

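An exponential distribution with rate $\beta > 0$, restricted to the unit interval, is equivalent to a continuous Bernoulli distribution with $\lambda = 1/(1 + e^{\beta})$, that is, with natural parameter $\eta = \log\frac{\lambda}{1-\lambda} = -\beta$.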
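
The correspondence can be checked numerically. The sketch below assumes an exponential distribution with rate $\beta > 0$ (passed as the hypothetical parameter `rate`), conditions it on $[0,1]$, and compares it pointwise with a continuous Bernoulli whose parameter is $\lambda = 1/(1+e^{\beta})$; positive rates therefore correspond to $\lambda < \tfrac{1}{2}$. All function names are illustrative helpers, not a library API.

```python
import math

def truncated_exp_pdf(x: float, rate: float) -> float:
    """Exponential(rate) density conditioned on landing in [0, 1]."""
    # Normalizer: P(X <= 1) = 1 - exp(-rate), computed stably via expm1.
    return rate * math.exp(-rate * x) / -math.expm1(-rate)

def cb_pdf(x: float, lam: float) -> float:
    """Continuous Bernoulli density C(lam) * lam**x * (1 - lam)**(1 - x)."""
    t = 1.0 - 2.0 * lam
    c = 2.0 if t == 0.0 else 2.0 * math.atanh(t) / t
    return c * lam ** x * (1.0 - lam) ** (1.0 - x)

def matching_lambda(rate: float) -> float:
    """lam = 1 / (1 + exp(rate)), so that log(lam / (1 - lam)) equals -rate."""
    return 1.0 / (1.0 + math.exp(rate))

for rate in (0.5, 2.0, 7.0):
    lam = matching_lambda(rate)
    diffs = [abs(truncated_exp_pdf(x, rate) - cb_pdf(x, lam)) for x in (0.0, 0.3, 1.0)]
    print(f"rate={rate}: lam={lam:.6f}, max abs difference {max(diffs):.2e}")
```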

Continuous categorical distribution

The multivariate generalization of the continuous Bernoulli is called the continuous-categorical.[10]

The mean and variance of the continuous Bernoulli distribution are:

$$\operatorname{E}[X] = \begin{cases} \frac{1}{2} & \text{if } \lambda = \frac{1}{2}, \\ \dfrac{\lambda}{2\lambda - 1} + \dfrac{1}{2\tanh^{-1}(1-2\lambda)} & \text{otherwise,} \end{cases}$$

$$\operatorname{var}[X] = \begin{cases} \frac{1}{12} & \text{if } \lambda = \frac{1}{2}, \\ -\dfrac{(1-\lambda)\lambda}{(1-2\lambda)^2} + \dfrac{1}{\left(2\tanh^{-1}(1-2\lambda)\right)^2} & \text{otherwise.} \end{cases}$$
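
The closed-form mean and variance above can be checked against numerical integration of the density. This is a minimal standard-library sketch; the helper names and the midpoint-rule resolution `n` are assumptions made for the illustration.

```python
import math

def cb_norm_const(lam: float) -> float:
    """C(lam) = 2*atanh(1 - 2*lam) / (1 - 2*lam), with C(1/2) = 2."""
    t = 1.0 - 2.0 * lam
    return 2.0 if t == 0.0 else 2.0 * math.atanh(t) / t

def cb_mean(lam: float) -> float:
    """Closed-form E[X] of the continuous Bernoulli."""
    if lam == 0.5:
        return 0.5
    return lam / (2.0 * lam - 1.0) + 1.0 / (2.0 * math.atanh(1.0 - 2.0 * lam))

def cb_var(lam: float) -> float:
    """Closed-form var[X] of the continuous Bernoulli."""
    if lam == 0.5:
        return 1.0 / 12.0
    return (-(1.0 - lam) * lam / (1.0 - 2.0 * lam) ** 2
            + 1.0 / (2.0 * math.atanh(1.0 - 2.0 * lam)) ** 2)

def moment(lam: float, k: int, n: int = 10_000) -> float:
    """Midpoint-rule estimate of E[X**k] under the continuous Bernoulli density."""
    h = 1.0 / n
    c = cb_norm_const(lam)
    total = 0.0
    for i in range(n):
        x = (i + 0.5) * h
        total += x ** k * c * lam ** x * (1.0 - lam) ** (1.0 - x)
    return total * h

for lam in (0.1, 0.3, 0.5, 0.8):
    m1 = moment(lam, 1)
    var_numeric = moment(lam, 2) - m1 ** 2
    print(f"lam={lam}: mean {cb_mean(lam):.6f} vs {m1:.6f}, "
          f"variance {cb_var(lam):.6f} vs {var_numeric:.6f}")
```

For $\lambda$ close to, but not equal to, $\tfrac{1}{2}$, both closed forms subtract two large, nearly equal terms, so a series expansion around $\lambda = \tfrac{1}{2}$ may be the more robust choice there.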
