Family of continuous probability distributions

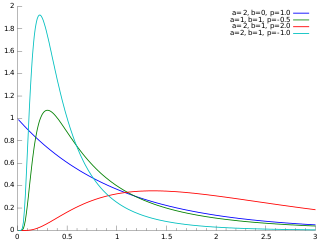

In probability theory and statistics, the generalized inverse Gaussian distribution (GIG) is a three-parameter family of continuous probability distributions with probability density function

$$f(x) = \frac{(a/b)^{p/2}}{2K_p(\sqrt{ab})}\, x^{p-1} e^{-(ax + b/x)/2}, \qquad x > 0,$$

where $K_p$ is a modified Bessel function of the second kind, a > 0, b > 0 and p is a real parameter. It is used extensively in geostatistics, statistical linguistics and finance, among other fields. This distribution was first proposed by Étienne Halphen.[1][2][3]

It was rediscovered and popularised by Ole Barndorff-Nielsen, who called it the generalized inverse Gaussian distribution. Its statistical properties are discussed in Bent Jørgensen's lecture notes.[4]
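As a quick numerical illustration, the sketch below evaluates the standard GIG density $f(x) \propto x^{p-1} e^{-(ax+b/x)/2}$ with normalizing constant $(a/b)^{p/2} / (2K_p(\sqrt{ab}))$ and checks that it integrates to roughly one. The helper names `bessel_k`, `make_gig_pdf` and `midpoint_integral` are hypothetical and not taken from any library, and the parameter values are arbitrary. The Bessel function is approximated from its integral representation so that the example needs only the standard library; a library routine such as `scipy.special.kv` could be substituted.

```python
import math

def bessel_k(nu, z, h=0.01):
    """Approximate K_nu(z), z > 0, with the trapezoid rule applied to
    K_nu(z) = integral_0^inf exp(-z*cosh(t)) * cosh(nu*t) dt."""
    total = 0.5 * math.exp(-z)          # t = 0 endpoint, trapezoid weight 1/2
    t = h
    while z * math.cosh(t) < 745.0:     # past this point exp() underflows to 0
        total += math.exp(-z * math.cosh(t)) * math.cosh(nu * t)
        t += h
    return h * total

def make_gig_pdf(a, b, p):
    """Return the GIG(a, b, p) density as a function of x (assumes a, b > 0)."""
    # The normalizing constant is computed once and reused for every x.
    norm = (a / b) ** (p / 2) / (2.0 * bessel_k(p, math.sqrt(a * b)))

    def pdf(x):
        if x <= 0:
            return 0.0
        return norm * x ** (p - 1) * math.exp(-0.5 * (a * x + b / x))

    return pdf

def midpoint_integral(f, lo, hi, n=6000):
    """Composite midpoint rule; adequate for this smooth, fast-decaying integrand."""
    step = (hi - lo) / n
    return step * sum(f(lo + (i + 0.5) * step) for i in range(n))

# Arbitrary illustrative parameters.
pdf = make_gig_pdf(a=2.0, b=3.0, p=0.7)
total_mass = midpoint_integral(pdf, 0.0, 60.0)
print(total_mass)  # should be close to 1
```

The upper integration limit of 60 is a pragmatic cutoff for these parameters: the factor $e^{-ax/2}$ makes the neglected tail negligible.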

Related distributions

Special cases

The inverse Gaussian and gamma distributions are special cases of the generalized inverse Gaussian distribution for p = −1/2 and b = 0, respectively.[7] Specifically, an inverse Gaussian distribution of the form

$$f(x;\mu,\lambda) = \left[\frac{\lambda}{2\pi x^{3}}\right]^{1/2} \exp\left(\frac{-\lambda (x-\mu)^{2}}{2\mu^{2}x}\right)$$

is a GIG with $a = \lambda/\mu^{2}$, $b = \lambda$, and $p = -1/2$. A gamma distribution of the form

$$g(x;\alpha,\beta) = \frac{\beta^{\alpha}}{\Gamma(\alpha)}\, x^{\alpha-1} e^{-\beta x}$$

is a GIG with $a = 2\beta$, $b = 0$, and $p = \alpha$.

Other special cases include the inverse-gamma distribution, for a = 0.[7]
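The inverse Gaussian case can be checked directly by expanding the exponent of the inverse Gaussian density with mean $\mu$ and shape $\lambda$. The following is a short worked derivation, not a formal proof:

$$
\begin{aligned}
-\frac{\lambda (x-\mu)^{2}}{2\mu^{2}x}
&= -\frac{\lambda}{2\mu^{2}}\,x + \frac{\lambda}{\mu} - \frac{\lambda}{2x} \\
&= -\frac{1}{2}\left(\frac{\lambda}{\mu^{2}}\,x + \frac{\lambda}{x}\right) + \frac{\lambda}{\mu}.
\end{aligned}
$$

Together with the factor $x^{-3/2} = x^{p-1}$, this is the GIG kernel $x^{p-1} e^{-(ax+b/x)/2}$ with $a = \lambda/\mu^{2}$, $b = \lambda$ and $p = -1/2$. The leftover constant $e^{\lambda/\mu}$ is absorbed into the normalization, in agreement with the closed form $K_{-1/2}(z) = \sqrt{\pi/(2z)}\,e^{-z}$ evaluated at $z = \sqrt{ab} = \lambda/\mu$. The gamma case follows in the same way by setting $b = 0$ in the kernel, which leaves $x^{p-1} e^{-ax/2}$, so that $p = \alpha$ and $a = 2\beta$.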

Conjugate prior for Gaussian

The GIG distribution is conjugate to the normal distribution when serving as the mixing distribution in a normal variance-mean mixture.[8][9] Let the prior distribution for some hidden variable, say $z$, be GIG:

$$P(z \mid a, b, p) = \operatorname{GIG}(z \mid a, b, p)$$

and let there be $T$ observed data points, $X = x_1, \ldots, x_T$, with normal likelihood function, conditioned on $z$:

$$P(X \mid z, \alpha, \beta) = \prod_{i=1}^{T} N(x_i \mid \alpha + \beta z, z)$$

where $N(x \mid \mu, v)$ is the normal distribution, with mean $\mu$ and variance $v$. Then the posterior for $z$, given the data, is also GIG:

$$P(z \mid X, a, b, p, \alpha, \beta) = \operatorname{GIG}\left(z \mid a + T\beta^{2},\; b + S,\; p - \frac{T}{2}\right)$$

where $S = \sum_{i=1}^{T} (x_i - \alpha)^{2}$.[note 1]
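The conjugate update can be sketched in a few lines. The code below assumes a GIG(a, b, p) prior on the hidden variable z and independent normal observations with mean α + βz and variance z, so that the posterior parameters are a + Tβ², b + S and p − T/2 with S the sum of squared deviations from α. The function names and the sample data are hypothetical, chosen only for illustration. The final check confirms that prior times likelihood differs from the posterior kernel only by a factor that does not depend on z.

```python
import math

def gig_posterior_params(a, b, p, alpha, beta, xs):
    """Posterior GIG parameters for z after observing xs ~ N(alpha + beta*z, z)."""
    T = len(xs)
    S = sum((x - alpha) ** 2 for x in xs)
    return a + T * beta ** 2, b + S, p - T / 2

def log_gig_kernel(z, a, b, p):
    """Log of the unnormalized GIG density z^(p-1) * exp(-(a*z + b/z)/2)."""
    return (p - 1) * math.log(z) - 0.5 * (a * z + b / z)

def log_likelihood(z, alpha, beta, xs):
    """Log-likelihood of xs under N(alpha + beta*z, variance z)."""
    return sum(-0.5 * math.log(2 * math.pi * z)
               - (x - alpha - beta * z) ** 2 / (2 * z) for x in xs)

# Hypothetical prior parameters and data.
a, b, p = 1.0, 2.0, 0.5
alpha, beta = 0.2, 0.5
xs = [0.3, 1.1, -0.4, 2.0]

a_post, b_post, p_post = gig_posterior_params(a, b, p, alpha, beta, xs)

# log(prior * likelihood) - log(posterior kernel) should be the same for every z.
gaps = [log_gig_kernel(z, a, b, p) + log_likelihood(z, alpha, beta, xs)
        - log_gig_kernel(z, a_post, b_post, p_post)
        for z in (0.5, 1.3, 4.0)]
spread = max(gaps) - min(gaps)
print(a_post, b_post, p_post)
print(spread)  # should be zero up to rounding error
```

Only the sufficient statistics T and S enter the update, so the data can be summarized once and discarded.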

Sichel distribution

The Sichel distribution[10][11] results when the GIG is used as the mixing distribution for the Poisson parameter $\lambda$.
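Under the assumption that the Poisson rate $\lambda$ follows the GIG density $f$ with parameters a, b and p as defined at the top of this article, the mixed probability mass function can be worked out from the integral identity $\int_0^\infty x^{q-1} e^{-(Ax + B/x)/2}\,dx = 2\,(B/A)^{q/2} K_q(\sqrt{AB})$. This is a sketch in the (a, b, p) parameterization; published parameterizations of the Sichel distribution differ.

$$
\begin{aligned}
P(N = n) &= \int_0^\infty \frac{e^{-\lambda}\lambda^{n}}{n!}\, f(\lambda)\, d\lambda \\
&= \frac{(a/b)^{p/2}}{2\,n!\,K_p(\sqrt{ab})} \int_0^\infty \lambda^{n+p-1} e^{-((a+2)\lambda + b/\lambda)/2}\, d\lambda \\
&= \frac{(a/b)^{p/2}}{n!\,K_p(\sqrt{ab})} \left(\frac{b}{a+2}\right)^{(p+n)/2} K_{p+n}\!\left(\sqrt{(a+2)\,b}\right), \qquad n = 0, 1, 2, \ldots
\end{aligned}
$$

The Poisson factor $e^{-\lambda}$ simply shifts the GIG parameter a to a + 2, and $\lambda^{n}$ shifts p to p + n, which is why the result is again expressed through modified Bessel functions.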

The GIG distribution with parameters a, b and p has the following summary statistics. The mean and the mean of the reciprocal are

$$\operatorname{E}[x] = \frac{\sqrt{b}\; K_{p+1}(\sqrt{ab})}{\sqrt{a}\; K_{p}(\sqrt{ab})}$$

$$\operatorname{E}[x^{-1}] = \frac{\sqrt{a}\; K_{p+1}(\sqrt{ab})}{\sqrt{b}\; K_{p}(\sqrt{ab})} - \frac{2p}{b}$$

The mean of the logarithm is

$$\operatorname{E}[\ln x] = \ln\frac{\sqrt{b}}{\sqrt{a}} + \frac{\partial}{\partial p}\ln K_{p}(\sqrt{ab})$$

The variance is

$$\left(\frac{b}{a}\right)\left[\frac{K_{p+2}(\sqrt{ab})}{K_{p}(\sqrt{ab})} - \left(\frac{K_{p+1}(\sqrt{ab})}{K_{p}(\sqrt{ab})}\right)^{2}\right]$$

The entropy is

$$
\begin{aligned}
H = \frac{1}{2}\log\left(\frac{b}{a}\right) &{}+ \log\left(2K_{p}\left(\sqrt{ab}\right)\right) - (p-1)\,\frac{\left[\frac{d}{d\nu}K_{\nu}\left(\sqrt{ab}\right)\right]_{\nu=p}}{K_{p}\left(\sqrt{ab}\right)} \\
&{}+ \frac{\sqrt{ab}}{2K_{p}\left(\sqrt{ab}\right)}\left(K_{p+1}\left(\sqrt{ab}\right) + K_{p-1}\left(\sqrt{ab}\right)\right)
\end{aligned}
$$

where $\left[\frac{d}{d\nu}K_{\nu}\left(\sqrt{ab}\right)\right]_{\nu=p}$ is the derivative of the modified Bessel function of the second kind with respect to its order $\nu$, evaluated at $\nu = p$.
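The moment formulas for the GIG distribution are ratios of modified Bessel functions and are straightforward to evaluate numerically. The sketch below computes the mean, the mean of the reciprocal and the variance for parameters a, b, p, and evaluates them in the inverse Gaussian special case p = −1/2, where the known values are μ, 1/μ + 1/λ and μ³/λ. The function names are hypothetical, and `bessel_k` is a standard-library stand-in for a library routine such as `scipy.special.kv`.

```python
import math

def bessel_k(nu, z, h=0.01):
    """Approximate K_nu(z), z > 0, with the trapezoid rule applied to
    K_nu(z) = integral_0^inf exp(-z*cosh(t)) * cosh(nu*t) dt."""
    total = 0.5 * math.exp(-z)          # t = 0 endpoint, trapezoid weight 1/2
    t = h
    while z * math.cosh(t) < 745.0:     # past this point exp() underflows to 0
        total += math.exp(-z * math.cosh(t)) * math.cosh(nu * t)
        t += h
    return h * total

def gig_mean(a, b, p):
    """E[x] = sqrt(b/a) * K_{p+1}(w) / K_p(w), with w = sqrt(a*b)."""
    w = math.sqrt(a * b)
    return math.sqrt(b / a) * bessel_k(p + 1, w) / bessel_k(p, w)

def gig_mean_reciprocal(a, b, p):
    """E[1/x] = sqrt(a/b) * K_{p+1}(w) / K_p(w) - 2p/b."""
    w = math.sqrt(a * b)
    return math.sqrt(a / b) * bessel_k(p + 1, w) / bessel_k(p, w) - 2 * p / b

def gig_variance(a, b, p):
    """Var[x] = (b/a) * (K_{p+2}/K_p - (K_{p+1}/K_p)^2), all evaluated at w."""
    w = math.sqrt(a * b)
    k0, k1, k2 = (bessel_k(p + j, w) for j in range(3))
    return (b / a) * (k2 / k0 - (k1 / k0) ** 2)

# Inverse Gaussian special case with mu = 2, lam = 3: a = lam/mu^2, b = lam, p = -1/2.
mu, lam = 2.0, 3.0
a, b, p = lam / mu ** 2, lam, -0.5
print(gig_mean(a, b, p))             # compare with mu
print(gig_mean_reciprocal(a, b, p))  # compare with 1/mu + 1/lam
print(gig_variance(a, b, p))         # compare with mu**3 / lam
```

Because every quantity is a ratio of Bessel functions at the same argument, exponentially scaled Bessel routines can be used instead when $\sqrt{ab}$ is large enough for $K_p$ itself to underflow.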